12/09/2018

Spinrite Puzzle Pieces - Dynastat

The key thing is that many descriptions display the chart in monochrome or b&w and lose the ASCII "focus window" that pops up in the center of the display when it goes to work. That is, "you don't see it" unless you know exactly which 8-bit graphic characters to "look for".

However the Spinrite 5 - Owner's Guide has a color snapshot, and when it runs on your computer Spinrite 5 or Spinrite 6 displays the information in full color.

Mostly it's a [Target Site] (a sight, like on a gun or instrument) that draws your attention to just a few bits being read in a sector. Old school most sectors were 512 bytes, today they are more likely to be 4096 bytes.. it's kind of decided by the hard drive manufacturer at the factory.. though some can be switched by hardware jumpers or firmware/software by the end user. The [Target Site] "slides" from Left to Right along the sector bit stream, with a [Very] important Left-hand [scale] which tells you some very important things.

Elsewhere in the Spinrite 5 - Owner's Guide, are drawings and descriptions of "Flux Reversals" and some theory on how they are used to store data in a somewhat loose, variable way which ends up storing digital "1's" and "0's" in an [analog] signal format on the "spinning rust". Basically the hard drive has a "cut off point" or threshold at which it decides an analog signal being read from the drive is a "1" or a "0" and reports a string of bits to an error correction engine.

Some of the bits represent a checksum and the engine can go to work reconstructing the string if it determines a read was "bad" or "no good".. if that fails.. the drive reports that the sector cannot be read. The drive can also try to "re-read" the sector to try blindly to get a "perfect" read.. but that seems to be up to the judgement of higher up software decision makers in the firmware, bios, and operating system... as far as the ECC engine is concerned it's fixed, or it's not fixed.

That [ Left-hand ] scale is an [uncertainty] scale. Close to the [Center] there is "little" or "no" uncertainty. The [threshold] reader for a single read decides there is [no doubt] or very little doubt, that a bit is a "1" or "0".

Over many successive reads of the same sector, the bits are [re-read] over and over. If they are always the same value, a "1" or "0" their uncertainty "Stays" very close to the center line, because the doubt is very small. And their "digital wiggle" tracks stay [red] for [certain].

But if over repeated re-reads those same bits sometimes "flip" from "1" to "0" or vice versa, then their [certainty] gets [more] "uncertainty" so they drift away from the center line.. in the direction that they "most" show up as.. that is "mostly a -1-" or "mostly a -0-".. meanwhile as the same sector is read over and over again to [pile up] a real-time data graph of information about the same [Target Sited] bits the levels can "change" moving up and down -- or really "closer" or "further" from the "certain-line"... and that's why the center line is labeled with a [?].. it's a question of how certain any one bit is and which value it's appearing "mostly" as..
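A rough way to picture that tally in code. This is only a sketch of my interpretation, not Spinrite's actual algorithm; the function name, the scoring and the sample reads are all made up for illustration.

```python
from collections import Counter

def bit_drift(reads):
    """For each bit position, return (mostly_value, uncertainty).

    reads: list of equal-length strings of '0'/'1', one per re-read.
    uncertainty is 0.0 when every read agrees and approaches 0.5
    when the bit shows up as '1' and '0' about equally often.
    """
    n = len(reads)
    result = []
    for column in zip(*reads):          # one column per bit position
        counts = Counter(column)
        mostly, hits = counts.most_common(1)[0]
        result.append((mostly, 1 - hits / n))
    return result

# Four re-reads of the same (tiny, made-up) 4-bit "sector"
reads = ["1011", "1001", "1011", "1010"]
for pos, (mostly, doubt) in enumerate(bit_drift(reads)):
    print(pos, mostly, round(doubt, 2))
```

Bits 0 and 1 never change so they sit on the center line with zero doubt, while bits 2 and 3 each flipped once in four reads and drift off to the "mostly a 1" side.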

If this were a "horizontal tube graph" it might be more intuitive since at the extreme edges of the graph, where Uncertainty is Maxed out for an "appears 1" or "appears 0" they are just as likely to be the opposite.. so it sort of "wraps around" in uncertainty.

Put another way the y-axis Zero value is 100% certain and either side is [backing down] from 100% certain.. so it's sort of like SciFi Tachyons.. you can't go at the speed of light.. but you can back down from either side of it.

Perfect "bits" don't move up or down, their certainty and uncertainty "never" change.. but real-world bits tend to change a little bit. Imperfect or "bad bits" do change (a lot) and seem to move up and down while being re-read.. and Dynastat is measuring that change to "try" and discern a "pattern" or a puzzle piece that works to solve the error correction engine puzzle and "invent" a perfect read result.

When Spinrite does solve the puzzle it "lets go" of the sector and lets the drive "re-locate" the bits in that sector to a spare sector of the drive, so the result of the puzzle solving is not lost. (of course by accident, a good read "might" happen out of all the repeated reads.. that also "lets go" and gets relocated immediately.. if at the end nothing comes up.. then it makes a guess and manually writes the guess and relocates)
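The "puzzle solving" step might look something like the sketch below: take the "mostly" values as a starting guess, then try the doubtful bits both ways until the checksum is happy. This is my guess at the idea only. A real drive uses its own ECC, not CRC32, and `solve_sector` and the sample data are hypothetical.

```python
import itertools
import zlib

def solve_sector(best_guess, uncertain_positions, expected_crc):
    """Try every 0/1 combination of the uncertain bits until the checksum matches.

    best_guess: string of '0'/'1' characters (the "mostly" values).
    Returns the matching bit string, or None if no combination fits.
    CRC32 is only a stand-in here for the drive's real error correction code.
    """
    bits = list(best_guess)
    for combo in itertools.product("01", repeat=len(uncertain_positions)):
        for pos, value in zip(uncertain_positions, combo):
            bits[pos] = value
        candidate = "".join(bits)
        if zlib.crc32(candidate.encode("ascii")) == expected_crc:
            return candidate
    return None

true_sector = "1011001110001111"
crc = zlib.crc32(true_sector.encode("ascii"))
guess = "1011011110001011"   # bits 5 and 13 came back wrong
print(solve_sector(guess, [5, 13], crc) == true_sector)
```

The fewer bits that are in doubt, the fewer combinations there are to try, which would be why it pays to narrow the focus down to just the "variable" bits first.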

Normally - Dynastat stays "laser focused" on a small number of bits [centered - horizontally] in its "sites" and the bits on either side do not move. But when Dynastat is not being used, it strums through the string of bits displaying them as a red beaded line appearing to ramp up and down. The uncertainty doesn't really matter as long as the error correction engine is still reporting a sector as "good read" and nothing happens.. error engine is happy, spinrite is happy.. only when the gyrating produces a "bad result" according to the checksums does Dynastat swing into action and "focus" on a particular region.

But this is very confusing to the average user.. the hypnotic rhythmic march of the beaded red line (which is a "read" line) can stay close to the center line of "certainty" or veer wildly from it depending on the age and freshness of the Magnetic Flux "fade" but as long as it doesn't trip the error correction warning klaxons all is well.. so it's really meaningless unless the error correction bits do not return a "good read" result. When things go bad though, the display changes and the Site Scope pops up in living color and zeros in on the bad bits and starts re-reading that sector and displaying only those "few" bits that have been determined to be "variable" or causing the problem.

As the alien "blue-green" bit snake wiggles back and forth.. Spinrite does battle with the Cyan data dragon wresting your data from oblivion... or that's how I like to picture it.

Maybe Spinrite's official mascot should be a blue-green dragon with a 'Dirk the Daring' knight to save the day?

I've no special knowledge of whether my interpretation is rite or wrong. I don't know GRC or have any other references regarding the program, I use it and have wondered about Dynastat for a long time.

This is my online blog and I'm pretty sure no one reads it.. so if I guess wrong it's pretty harmless.

Mostly these are just my personal notes on the matter.

ps. another mental picture

If you had a thousand piece Jigsaw Puzzle and were close to finishing. Then discovered only one piece was missing.. you could give up. Or you could look at the shape of the Puzzle Piece, and all of the surrounding puzzle pieces and the pictures on them and try to "invent" or "make up" your own replacement puzzle piece from all of the evidence available to you. You could cut it out of thin cardboard, draw a picture on it that sort of looks similar and try over and over again.. until you felt it was perfect (or good enough) match.

In the world of data, usually many layers of redundancy exist far above the actual bit layer. In a picture for example, rarely does any one bit matter.. the human eye tends to "gloss over" or fill in the blanks visually from intuition. Databases tend to have redundant file system error correction mechanisms based on parity or additional copies of the data in buffers and caches which will automatically be referred to upon discovering a problem at those higher layers. And people are supposed to actually have "backup" copies of their data..

So it's kind of like from the Quantum level to the Macro level, that we all live in, we have built a digital world on top of a sea of ever shifting sand.. which by its very nature is "random" and unpredictable.

The Universe at large is also like those "certainty" graphs.

Close up and within our Solar System we are very certain of things, even closer.. on the tip of our nose.. we are "very certain" of things.. but looking at a distant star.. or the edge of the Universe with a telescope? Our Uncertainty grows very large.. and in every direction we come to an infinite uncertainty that we assume "wraps around" us like a bubble in every direction in space.. and the bubble extends even in the direction of time. We are in a nearby bubble of "certainty" in an ocean of "uncertainty" which wraps around us like an all enveloping envelope.

The Big Bang was a point of "certainty" like the center line in the Dynastat graph.. it was we think the "most certain" point in all of creation.. everything further away from that in time or space has been less and less certain and more "variable".

The Quantum realm itself didn't exist in the moment of the big bang.. there was no room in space, no room in time for it to exist.. there was no quantum uncertainty. But as the universe unwound, or unfolded like a developing flower.. it spun out intricate and seemingly infinite complexity like a fractal.. and more and more quantum levels and states became apparent.. more and more uncertainty developed.

It's almost like the Second Law of Thermodynamics, that everything degenerates or declines, is an admission that as the universe evolves it becomes "more complex" because "there are more possibilities" or more uncertainty.

And that's kind of the magic link between quantum effects of the very small and the gravity effects of the very large.. it's that one evolved from the other.. as gravity relaxes its grip, entropy grows and as entropy grows.. so does uncertainty.

or even simpler.. bring a lot of people together to build a town with a core.. and spreading out from that core will be ever more complex neighborhoods and organized regions with "some level" of influence over all of the others through the shared core.. but they will also have regional differences and complexities.. different quantum realms.

what was the saying?

The future is here, it's just not evenly distributed..

9/26/2018

JVC Compu Link, a brief history of time and space

JVC Compu Link (also called SYNCHRO 'terminal') was a simple point to point or later disjointed daisy chain method of connecting many Audio (only) devices together in a chain of devices. Initially TAPE (Cassette), CD (player) and MD - MiniDisc (recordable) to each other and to an AMP (AM/FM Receiver, Amplifier, Source switcher with IR remote receiver).

The protocol seems to be based on an 8 bit data frame with a stop (or possibly a Parity) bit, at 100 or 110 baud (bps) in which 3 bits represent a Device, and four bits represent a state or 'command' from or to an addressed device. The initial cable was described as a [monoaural Ring/Tip miniplug] carrying logic levels of 5 volts or 0 volts referenced to Ring ground. And normally [High] meaning a Pull-Up resistor was used to maintain a reference when not transmitting data.
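To make the "3 bits of Device plus four bits of command" idea concrete, here is a minimal sketch of packing and unpacking such a frame. The bit positions, the device number and the command code are all assumptions on my part. I have not seen the real layout documented anywhere.

```python
def pack_frame(device, command):
    """Pack a 3-bit device number and a 4-bit command into one byte.

    Assumed layout (not confirmed): device in bits 6..4, command in bits 3..0.
    """
    if not 0 <= device <= 0b111:
        raise ValueError("device must fit in 3 bits")
    if not 0 <= command <= 0b1111:
        raise ValueError("command must fit in 4 bits")
    return (device << 4) | command

def unpack_frame(frame):
    """Split a frame byte back into (device, command)."""
    return (frame >> 4) & 0b111, frame & 0b1111

DEVICE_CD = 0b010     # hypothetical device number
CMD_PLAY = 0b0001     # hypothetical command code

frame = pack_frame(DEVICE_CD, CMD_PLAY)
print(f"{frame:08b}", unpack_frame(frame))
```

Three bits only allows eight device types and four bits only sixteen commands, which fits with how few things the early Compu Link versions could actually do.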

Compu Link had (four) generations starting from about the year 1991.

Compu Link - I (version 1) was described as having the ability to emit or receive a [Start or Source] command along the connection to other devices. If [Play] was pressed on an Audio device it would emit a [Switch to Source command indicating itself, to an assumed AMP connected through the Compu Link connection] the AMP would then switch its Source to the Device requesting attention and shutdown any other playing Source. Alternatively an AMP could issue a [Start or Play] command to a particular Device naming it in the first three bits of the data frame.

Compu Link - II (version 2) was described as having the same capabilities but layering on additional features, thereby maintaining backwards support for previous Compu Link - I Devices connected to Compu Link - II terminal ports.

Compu Link - III (version 3) gained [Stand By] or [On/Off] feature allowing the AMP to place a device into an On or Off mode called Stand By.

Compu Link - IV (version 4) gained the ability to coordinate Record/Pause and Playback between Audio components, in which a recorder was loaded with blank media, record and pause buttons pressed and then a separate source signal component was set to play, it would inform the AMP to switch to it as the source.. the AMP would inform the recorder that playback had begun and release the pause to begin recording.

The Compu Link terminal ports, or mini-plugs were mono-aural (only one Ring, so only one signal path per connection) and to connect one to an AMP only one per device was required, however to daisy chain from for example a TAPE, to CD player and then to an AMP the CD player would be expected to have two Compu Link terminals, one could be used for connection to the TAPE device and would convey its signals to the CD player which would also be connected by its second Compu Link terminal to the AMP.

Early AMPs were mostly AM/FM radio receivers which shared their speaker connections and allowed switching the source, later including advanced audio mixers for equalization and mixing signals. Compu Link made them intelligent and able to respond and command various Audio connected components. Including acting as a Master IR remote receiver which could relay commands from the IR remote along the Compu Link connections to the various Audio Devices with a single remote. note: it did not relay demodulated "IR" codes, as with some other manufacturers, rather it issued legitimate Compu Link protocol codes targeted for that type of connected Audio device.

When MD - "mini-disc" player/recorders came along the ability to copy and record signals to digital media opened up the ability to Read and Write [TEXT] from areas of the Disc. For this a new version of Compu Link called [TEXT Compu Link] using "bi-aural" mini-plugs or [Tip, Ring, Ring] was created and made using a {green colored} jacket. Early AMPs supporting this displayed the TEXT on Vacuum Fluorescent Display tubes called (VFD). Later Audio/Video AMPs would display TEXT Compu Link in TV On Screen Displays integrated in the video display menus on the TV. Early methods of entering TEXT were performed using a Qwerty alphanumeric keyboard in the remote, and were later replaced by On Screen Display navigation of a Video keyboard on the TV screen using remote control arrow keys.

When Video source became available and an Audio/Video switcher was added to the AMP another new version of Compu Link called [AV Compu Link] using another set of mini plugs was created. There were three (I, II, III) versions of AV Compu Link. In addition TVs were given AV Compu Link terminal ports, and or Compu Link EX terminal ports. The functions included a similar ability to set the Video Source of the AMP (now called generically the Receiver) either by turning on a Video source, or by turning on the TV. The "Receiver" would then use the commands from the Video source or the TV to set the appropriate paths for video and audio through the receiver to the TV for playback. Like MD, this began with VCR, DVD sources and progressed through recordable versions.

The Compu Link family of protocols were a Home Theatre set of protocols to coordinate signal source and choice using a central Master device called the AMP or Receiver which could also consolidate the remotes into one remote for the Receiver to control all of the other Audio and Video components to the Speaker and/or the TV display device.

JVC JLIP (Joint Level Interface Protocol) - J-Terminal

The JVC JLIP protocol was a circa 1996 pseudo serial protocol over an RS232 connection between devices and a PC serial port. Capable of send and receive of targeted master slave communications.

It is possible the name "Joint Level" - "Interface Protocol" refers to the use of "Junction Boxes" to share a single Serial connection with a PC among many J-terminal or JLIP enabled devices. Each connection between a J-terminal device and a PC was intended to be "point to point" however, to share that connection a breakout box or "device" with two or more simultaneous connections to the same Serial uplink to the PC was necessary. Thus to arbitrate between two or more simultaneously connected devices when passing messages each device must be assigned a unique Identifier or "ID" and that must be encapsulated in the protocol used at the "Joint Level". Since the "Junction Boxes" are essentially passive and contain no intelligent routing decision engines, each device itself must perform the decision or inspection of each message packet in order to determine when or if a particular message packet is intended for itself or is part of an ongoing conversation between itself and the PC. -- an equally descriptive title could have been "Junction Box Interface Protocol" however it is likely the protocol was developed before the junction boxes and hence the naming refers to an as yet to be defined interface device to accommodate the protocol.

It was used as an alternative to the IEEE1394 or "Firewire" standard as a proposed method of locally networking a number of audio/video and multimedia printer and video frame capture devices with point to point connections.

Because it was a master/slave system, targets are treated as resources addressed individually by the master (PC) using embedded "ID" codes in the frames used to pass messages to and from the targeted slave devices connected to the bus or the PC over the electrical signaling connection. These "IDs" were often self-assigned from within the menus or user interfaces inside the JLIP devices themselves with faceplate buttons or onscreen menu options using the device's IR remote. For example VCRs with a JLIP port (aka.. a 'J-terminal') had an onscreen menu location for manually 'setting' the JLIP ('ID') value.

Each frame of the JLIP protocol consisted of 11 bytes with a checksum at the end to help manage frame or packet corruption during transmission or reception. Various return bytes would acknowledge receipt of the command or status.
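Here is a small sketch of what an 11 byte frame with a trailing checksum looks like in practice. Only the frame length and "checksum at the end" come from my notes above. The field order and the checksum formula below are placeholders I made up, not the real JLIP ones.

```python
FRAME_LEN = 11

def toy_checksum(payload):
    # Placeholder: low 7 bits of the byte sum. NOT the real JLIP checksum.
    return sum(payload) & 0x7F

def build_frame(device_id, body):
    """Build an 11-byte frame: [id][9 body bytes, zero padded][checksum].

    This field layout is assumed for illustration only.
    """
    if len(body) > FRAME_LEN - 2:
        raise ValueError("body too long")
    payload = bytes([device_id]) + bytes(body).ljust(FRAME_LEN - 2, b"\x00")
    return payload + bytes([toy_checksum(payload)])

def frame_ok(frame):
    """True if the frame has the right length and its checksum matches."""
    return len(frame) == FRAME_LEN and toy_checksum(frame[:-1]) == frame[-1]

frame = build_frame(device_id=3, body=[0x40, 0x01])
print(frame.hex(), frame_ok(frame))

# Flip one bit of the first byte, as line noise might
corrupted = bytes([frame[0] ^ 0x01]) + frame[1:]
print(frame_ok(corrupted))
```

The receiving side recomputes the checksum over the first ten bytes and simply ignores (or NAKs) anything that does not match, which is about all a checksum like this can do.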

The protocol was not widely published and only deciphered by observation a few times. It was in fact a Patented and Proprietary protocol that belonged exclusively to JVC.

The "uses" to which this protocol was put were several:

1. for managing camcorder or vcr playback and record and positioning for assemble and insert editing (dubbing) with a second playback or recording deck, if used with a special capture box to pass video through to an output and then input into the recording video deck, transitions and screen wipes could be inserted into the video stream.

2. for coordinating audio/video equipment like camcorders or vcrs in order to capture still frames and upload them to a PC or print them to special photo paper

Camcorders often came with several 'ports' including JLIP electrical ports. PC serial ports ran at traditional RS-232 full voltage swings of +/- 12 volts while JLIP ports ran at the newer, smaller device TTL voltage swings of 0 to 5 volts. A JLIP to PC cable therefore needed a voltage level converter to allow a JLIP device to communicate with a PC. However a JLIP port enabled device could also have a second JLIP port in order to daisy chain the device with up to 50 or 63 other daisy chained devices..(these "splitter" boxes were called "JLIP junction boxes".. only a very few were ever made) this was similar to the way a USB port, hub and device daisy chains would be possible later. When connecting a JLIP device to a JLIP device a special cable with no level converter could be used. A special PC to JLIP port cable contained a level converter and was only needed with the first JLIP device in a chain. A JLIP device which connected to other JLIP devices downstream was called a 'Junction Box' somewhat similar to the terminology used with USB hubs today. Unlike USB however, power was not conveyed from the PC to the JLIP device and all such JLIP devices and JLIP Junction boxes had to be 'self' powered.

In theory a camcorder with both a PC port and a JLIP port could be used as a 'Junction Box'.

At this time USB was either too slow, too new, or not widely accepted and was not added to camcorders and other devices until many years later..

JVC released several standalone software/cable JLIP packages to partner with or use with equipment it made with a JLIP interface port. The JLIP port was identified by the stylized italic "J" symbol inside a purple box and later referred to retroactively as a [ "J-terminal" ] to more readily identify it as belonging to a class of commercial control ports similar to other commercial remote control management ports by other vendors like LANC, SLink, or AVCompulink.

These were:

JLIP Player Pack - HS-V1UPC for Windows 3.1 on 3.5 inch floppy disk only

JLIP Capture Pack - HS-V16KIT for Windows 98/95 on CDROM disc only

("possible" release history)

HS-V1KIT = JLIP Video Movie Player Ver 1.0

HS-V2KIT = JLIP Macintosh (Japan only)

HS-V3KIT = JLIP Video Capture Ver.2.0

HS-V5KIT = JLIP Video Producer Ver 1.1

HS-V7KIT = JLIP Video Capture Ver.2.1

HS-V9KIT = JLIP Video Capture Ver.3.0, JLIP Video Producer Ver.1.15

HS-V10KIT = JLIP Video Capture Ver.3.1, JLIP Video Producer Ver.1.15

HS-V11KIT = JLIP Video Capture Ver.3.1, JLIP Video Producer Ver 2.0

HS-V13KIT = JLIP Video Capture Ver.3.1, JLIP Video Producer Ver 2.0

HS-V14KIT = JLIP Video Capture Ver.3.1, JLIP Video Producer Ver 2.0

HS-V15KIT = JLIP Video Capture Ver.3.1, JLIP Video Producer Ver.2.0

HS-V17KIT

Video Movie Player - was essentially an MCI interface for remotely pressing buttons and capturing to a video printer

Video Capturer - was essentially a Still Frame capture to BMP or JPEG with photo transfer over the JLIP serial connection to the PC

Video Producer - was essentially a Linear A/B Roll "Edit Decision List" video editor for dubbing segments from multiple sources to a single recorder

Picture Navigator was an early Photo Collection manager with an Album interface for filing captured stills.

Most of this made more sense when used with one or more Camcorders and VCRs to produce video on a limited budget and with an analog linear editor system. The introduction of the DV firewire capture, transfer and whole digital workflow a few years later would become more popular and replace it. This was an [entirely] Analog Linear Assemble workflow.

These packages were basically for coordination and control of a remote controlled camcorder or vcr with a 'J-terminal' port. Capture was controlled after the media was properly positioned and triggered by a separate signal to a video capture device like the Video Capture Box (which was also a J-terminal junction box from 1 to 2 JLIP ports) or the Video Multimedia Printer (which had only 1 JLIP port)

JLIP Video Capture Box - GV-CB3U for stills or clips to files for upload to the Internet

JLIP Multimedia Printer - GV-PT2U for video capture to paper for publication or archiving

Advanced JLIP control

The process of finding, capturing and printing video pictures is facilitated by JLIP (Joint Level Interface Protocol), designed by JVC to enable efficient bidirectional control of AV equipment as well as to provide computer connectivity. In addition, the GV-PT2 can be connected to such JLIP-ready video hardware as the CyberCam Series of digital camcorders--the popular GR-DV1 or the recently announced GR-DVM1D--and used in conjunction with special JLIP-based software to create an advanced multimedia system totally controlled from the computer. With the mouse, the user can control everything from camcorder playback to printer memory functions, enjoying such convenient features as Scene Playback, which facilitates finding and printing just the right picture. Also, after a video editing session, the edit-in points can all be printed automatically.

Printing from a video source

When connected to a VCR or camcorder, the GV-PT2 can store image data in its field/frame memory and print directly from that. Using either the printer's own control buttons or the remote control unit (supplied), the desired scene is selected. Prior to printing, the user can choose from a number of design elements to personalize any scene.

Video Production equipment like the Datavideo SE-200 could also use the JLIP port to take control and manage the queuing and edit decision lists possible with assemble and insert editing pseudo-non-linear editing while creating new video clips and sequences of video programming on VHS or other tape media.

Essentially it was a proprietary master/slave RS-232 protocol at (TTL level 5 volts) for addressing devices on a p-t-p or chained or shared serial bus for the purpose of remote control and triggering events like power on/off - play/record - start/stop. The JLIP user interface software presented a video control deck interface graphically which scanned the bus and identified connected devices and allowed selecting one to work with and send and receive messages to and from. Secondarily it could capture stills from a capture box and return those to data files on the PC over the JLIP serial connection, or send data to the frame buffer of a printer connected by the JLIP serial connection.
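The "scan the bus and identify connected devices" part can be pictured with a toy simulation like this. Nothing here talks to real hardware. `FakeDevice`, the "IDENTIFY" command and the ID range are all stand-ins I invented (63 is just the upper figure I have seen quoted for chained devices).

```python
class FakeDevice:
    """Stand-in for a J-terminal device; it ignores frames not addressed to it."""

    def __init__(self, device_id, name):
        self.device_id = device_id
        self.name = name

    def handle(self, target_id, command):
        if target_id != self.device_id:
            return None              # not for us: stay silent
        if command == "IDENTIFY":    # hypothetical command name
            return self.name
        return "ACK"

def scan_bus(devices, max_id=63):
    """Poll every ID in turn. Every device sees every frame, because the
    junction boxes are passive and do no routing of their own."""
    found = {}
    for target_id in range(1, max_id + 1):
        for device in devices:
            reply = device.handle(target_id, "IDENTIFY")
            if reply is not None:
                found[target_id] = reply
                break
    return found

bus = [FakeDevice(6, "VCR"), FakeDevice(7, "Camcorder")]
print(scan_bus(bus))
```

This also shows why two devices set to the same ID would be a problem: both would answer the same poll, and the master has no way to tell them apart.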

The JLIP communications protocol however was binary and checksummed so some sort of custom proprietary communications client had to be used other than a simple ascii terminal program.

A JLIP Debug program was written and released to monitor and originate JLIP commands to and from devices connected to a PC

JLIPD 1.0 beta released 2000-11-18

Documentation for the actual commands is hard to come by and often reverse engineered by observation of the interaction of a J-terminal connected JLIP device with JLIP control software.

JVC XV-D701 DVD player

Marantz 8300P D-VHS recorder

Helpful sites:

http://www.pixcontroller.com/Products/PixU_JLIP.htm

http://www.remotecentral.com/cgi-bin/mboard/rs232-ip/thread.cgi?179

http://garfield.planetaclix.pt/Entrada.html

JLIP and the JLIP logo are registered trademarks of JVC

addendum:

The JLIP Player Pack software was included on a 3.5 inch floppy diskette and bundled with a JLIP "junction box" and JLIP and PC cable(s) such that a PC could connect via a JLIP connector to the Junction Box and then split out into connections to two other JLIP devices with J-terminal ports. -- This would work to connect for example a camcorder (acting as video source) and a vcr (acting as video recorder). The JLIP Player software would then act as a software "Control Editor" which could queue up scenes on the camcorder and stage their playback to the paused vcr and "execute" the [dubbing] from the camcorder to the vcr. At points where a [tape switch] was necessary in the camcorder, in order to coordinate additional scene dubbings it would prompt for a new tape which would be manually swapped.

The JLIP Capture pack software was included on a CDROM disc and bundled with JLIP and PC cables but no junction box. The junction box "splitter feature" was combined inside a separate "Capture Box" which was sold separately. Standalone the Capture pack could control one video source which may or may not include a junction box "like" feature of a second J-terminal to connect additional JLIP devices. Confusingly this made many purchase combinations possible and worked the junction box feature downstream and into more dedicated function devices. A single PC could then install the Capture pack software and use that with other JLIP devices to manage them in other ways. For example the Capture pack included two software packages and the PC cable, JLIP cable and an Edit cable.

The first software package was intended to be used with a Video Capture box to queue up a video source like a camcorder and take still snapshots of the video and then upload those over its Serial connection to the PC to be stored and managed in a Photo Album. It never had the ability to capture video segments, but rather created index images of a sort. Likewise it could be used with a similar dye sublimation video capture and print device, which was designed to queue up a video source and take still snapshots of the video and hold those in printer memory for editing, uploading to the PC or printing them to special thermal paper.

The second software package was intended to be used as a more traditional assemble video editor with software decision list. It could mark In and Out for segments of a video source tape and create a decision list. Then the decision list could be edited offline to include transitions and wipes, if played through a special video capture box and dubbed to a video recorder, which was also controlled by the JLIP software through a junction box or direct connection. Additional video source tapes could be supported by adding a tape swap step in the edit decision list.

While archaic by today's standards with USB or even IEEE1394 "firewire" this took place in 1996 on Windows 3.1 and Windows 95/98 PCs, which was miraculous for the time.. and probably led to the eventual feature set that USB Serial connections would be known for a few years later. Certainly the Controller, Hub and Device hierarchy is quite familiar, although JLIP did not include a shared power source and instead of high speed serial frames, relied upon the more traditional RS-232 start, stop, byte frame protocols with additional procedural protocols layered upon the original ASCII 8 bit free form protocol.
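An edit decision list with In/Out marks and tape swap steps is simple enough to sketch. This is just my illustration of the idea. The `Segment` fields and the step wording are invented and have nothing to do with the actual Video Producer file format.

```python
from dataclasses import dataclass

@dataclass
class Segment:
    tape: str        # label of the source tape
    mark_in: int     # tape counter (seconds) where the segment starts
    mark_out: int    # tape counter (seconds) where the segment ends

def plan_dub(edl):
    """Turn an edit decision list into ordered steps, adding tape swaps."""
    steps = []
    loaded = None
    for seg in edl:
        if seg.tape != loaded:
            steps.append(f"SWAP to tape {seg.tape}")   # manual step, prompt the user
            loaded = seg.tape
        steps.append(f"CUE source to {seg.mark_in}")
        steps.append(f"RECORD {seg.mark_out - seg.mark_in}s")
    return steps

edl = [Segment("A", 10, 25), Segment("A", 90, 100), Segment("B", 5, 35)]
for step in plan_dub(edl):
    print(step)
```

Because the list is just data, it can be reordered or trimmed "offline" before any tape moves, and only the final run through actually commits anything to the recorder.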

It echoed many of the features of 10baseT Ethernet as well including limited station numbers and an initialization phase for identifying all stations on the local network of devices.

9/10/2018

LRM-519 enabling S-Video recordings

However, a previously configured hard disk from an LRM-519 that was set up with Satellite Input before the Guide service was discontinued can be cloned and inserted in another LRM-519 to enable it to be used as an S-Video or Composite recorder.

Manual recordings from the Satellite inputs are recorded in MPEG2 format, which can then be sent to a PC file share.

9/04/2018

empia 2861, Startech SVID2USB23 enabling audio capture

The C:\Windows\inf\usb.inf file is ('missing') and it will not be detected as missing even with sfc /scannow.

"The usb.inf file is also not one of the 3498 files that Windows File Protection looks after, so there is no backup copy on your system."

Many back up copies exist on your system, a file search for "usb.inf" with a tool like voidtools "Everything" will find them for you, and possibly Windows search targeting the C:\Windows subdirectory (although I gave up on Windows Search long ago and disabled it).

Simply copy one of the usb.inf "backups" into the C:\Windows\inf directory and unplug and re-plug in the 2861 device (SVID2USB23).
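If you would rather script the search and copy than do it by hand, something like this sketch would work. The function names are my own, it would need to be run from an elevated (Administrator) prompt to write into C:\Windows\inf, and it just takes the first copy it finds, so check which one that is before trusting it.

```python
import os
import shutil

def find_copies(root, filename="usb.inf"):
    """Return every path under root whose file name matches (case-insensitive)."""
    matches = []
    for folder, _dirs, files in os.walk(root):
        for name in files:
            if name.lower() == filename.lower():
                matches.append(os.path.join(folder, name))
    return matches

def restore(root, inf_dir, filename="usb.inf"):
    """Copy the first copy found outside inf_dir into inf_dir.

    Returns the path that was copied from, or None if nothing was found.
    """
    target = os.path.join(inf_dir, filename)
    same = os.path.normcase(os.path.abspath(target))
    for path in find_copies(root, filename):
        if os.path.normcase(os.path.abspath(path)) != same:
            shutil.copy2(path, target)
            return path
    return None

# Example call, using the directories mentioned above:
#   print(restore(r"C:\Windows", r"C:\Windows\inf"))
```

After the copy, unplug and re-plug the device the same as with the manual method.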

The first device that appears in the "Sound video and game controllers" represents the first Endpoint in the cable dongle, the video capture device.. the sound device is the second endpoint on the bus and fails to appear without usb.inf.

Once the usb.inf is in place the first endpoint will be detected as [ Imaging Device 2861 ] and the second endpoint as [ USB Audio ] and appears as [ Line (2861) ] in the Windows Sound Audio Mixer "Recording Devices".. a correct "Update Driver" and installing the EMAudio driver will convert that into Sound device (2861 Audio)

VLC is one of a few programs which can properly enumerate USB Audio device as selectable Audio Input for recording or playback. Many legacy audio capture programs will simply list "Master Volume" and not give you a choice from all available audio sources. Without this level of control.. the Audio Input from the USB audio cannot be turned on or have its levels set.

The SVID2USB23 also has S-Video and Yellow Composite Video inputs.. if you receive a black blank screen on recording it may need its crossbar video input switched over to the S-Video Input to capture video signal.

More usb.inf info here:

USB Generic parent driver

And more specifically here

Tools

USBaudio

LRM-519, enabling SMB file share uploads

In addition, the IPSec MMC plug-in which can be exposed (only) by creating a new MMC console and then Adding the IIPSec plugin can host a rules called [ WND ] which will block SMB connections by default and enormously slow down "Kerberos" authenticated logins, or any password authenticated logins.. in fact it will stop "correct" username /password authenticated logins in many cases.

The problem appears to be enforcement of a "Kerberos" specific rule that lingers after passing through a Domain joined phase, or that is left behind by an erroneous Windows patch, which happens quite often.

Rather than reinstalling the machine, create a new mmc.exe instance and add in the IPSec ruleset plugin. This is not the same thing as the Firewall IPSec plugin, nor is it the same thing as the IPSec VPN connection tool. It is a legacy, totally off the radar plugin, which also buries its rule sets and does not expose them unless you dig for them in the IPSec plugin: all rulesets appear blank until one is highlighted and the Inbound set is clicked on and then refreshed. Quite hidden, and quite bad programming.

8/20/2018

HP Stream N3050 Braswell, booting Win To Go

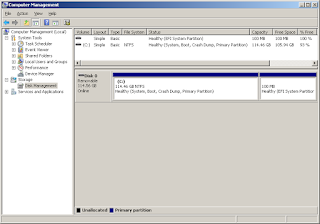

The BIOS defaults to booting Win10 from a GPT-EFI partition and runs Win10 from an NTFS partition. The NTFS partition is divided into two volumes on an eMMC (embedded MultiMediaCard), a flash memory chip embedded on the main/motherboard.

Unlike Chromebooks, which have a stripped down and bare bones BIOS, the Stream book has full features for booting from an MBR or GPT partitioned storage device, using the eMMC or a USB bus device connected to the main/motherboard. It cannot boot from a microSD storage device, though, even though it has an SD memory slot.

What that means is the BIOS will scan those two buses eMMC and USB for storage devices and then look into devices on them for MBR or GPT partitioned disks.

When it does, any MBR or GPT partitioned device with a partition marked either "Active" or "EFI" will be listed in its selectable boot menu (Esc, then F9) and can be chosen to attempt an operating system boot.

MBR is a mini-index format at the top of the drive that describes up to four Primary partitions (plus Logical partitions) and is used for locating an "Active" boot partition within the first 2 TB of an LBA (logical block addressing) drive.

The 2 TB limit comes from the 32 bit sector numbers stored in each MBR slot combined with the 512 byte sector size, and older 16 bit BIOSes could be limited further by the number of blocks they can access.
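
The 2 TB figure can be checked with a little arithmetic. This is a minimal sketch, assuming the usual 32 bit sector counts stored in MBR partition entries; the function name is only for illustration.

```python
# Largest disk an MBR partition table can describe, assuming the
# standard 32-bit sector fields in each partition entry.
MAX_SECTORS = 2 ** 32


def mbr_limit_bytes(sector_size):
    """Return the addressable capacity in bytes for a given sector size."""
    return MAX_SECTORS * sector_size


for size in (512, 2048, 4096):
    tib = mbr_limit_bytes(size) / 2 ** 40
    print(f"{size:>4}-byte sectors: {tib:g} TiB")
```

With 512 byte sectors this works out to 2 TiB, which is why larger sector sizes stretch the limit without removing it.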

The MBR slots contain the actual position within those blocks where the first boot sector for an Active partition can be found. The boot process automatically loads the first few blocks into memory and gives control over to them by pointing the CPU at the first one and executing it. Generally it's a tiny machine language program that "bootstraps" by loading the next few sectors, which hold a filesystem driver for accessing "that" partition's "specific" filesystem, and then scans for the second stage bootloader or pre-loader for that operating system. The "preloader" generally does things specific to the operating system, like preloading the device drivers the kernel will need in memory in order to access the rest of the storage device.
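
To make the "slots" concrete, here is a rough sketch of reading the four partition entries out of a 512 byte MBR sector. It assumes the classic layout (table at byte offset 446, 16 bytes per entry, 0x55AA signature at the end), and the demo sector is synthetic, not read from a real drive.

```python
import struct

ENTRY_FORMAT = "<B3sB3sII"  # status, CHS start, type, CHS end, LBA start, sector count


def parse_mbr(sector):
    """Return the four partition entries from a 512-byte MBR sector."""
    if len(sector) < 512 or sector[510:512] != b"\x55\xaa":
        raise ValueError("not a valid MBR boot sector")
    entries = []
    for i in range(4):
        raw = sector[446 + 16 * i: 446 + 16 * (i + 1)]
        status, _chs_a, ptype, _chs_b, lba_start, count = struct.unpack(ENTRY_FORMAT, raw)
        entries.append({
            "active": status == 0x80,  # 0x80 marks the bootable "Active" slot
            "type": ptype,
            "lba_start": lba_start,
            "sectors": count,
        })
    return entries


def build_demo_mbr():
    """Build a synthetic MBR with one active NTFS-type (0x07) partition."""
    sector = bytearray(512)
    sector[446:462] = struct.pack(ENTRY_FORMAT, 0x80, b"\0\0\0", 0x07, b"\0\0\0", 2048, 204800)
    sector[510:512] = b"\x55\xaa"
    return bytes(sector)


for slot, entry in enumerate(parse_mbr(build_demo_mbr())):
    print(slot, entry)
```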

Then the preloader loads the kernel into memory and jumps to its startup routine. The operating system decompresses the kernel, performs inventory and additional hardware specific platform "parts or bus initialization", runs any plug and play autodetect and additional device driver loading and initialization, and then begins to provide feedback to the end user via the default local console, a serial port or vga monitor. It then turns over control to a windows "manager" and presents a logon screen. Those are the general steps of most "windows like" systems, be they microsoft, apple or linux.

GPT is a redundant partitioning system, with a "fake" MBR, for use with storage devices larger than 2 TB.

A fake MBR is created at the top of the drive for marking the drive with a serial number and to indicate to old style BIOS boot systems that the drive is in use and contains information it can't see.. or that it is at least not "blank" and indicates caution should be exercised if the BIOS cannot further scan the storage device.

GPT keeps more than one copy of its mini-index system for listing partitions on the drive: a primary copy at the top of the drive and a backup copy at the bottom. Each copy carries a CRC32 checksum, so if one becomes damaged the other can be used to detect the damage and recover the accurate information without having to perform extensive bitwise parity reconstruction of the data. Like MBR, the partitions can be marked with a partition "type". For MBR that is simply "active or not active", but for GPT it's more extensive. Generally one partition will be marked as the ESP (EFI System Partition) with its own partition type code. It is a FAT32 filesystem partition intended to contain small 32 bit (or 64 bit) programs the UEFI can load into memory and execute, be they "C" programs for performing diagnostics, maintenance or bootstrapping an operating system.
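
One way damage to a GPT header can be detected is the CRC32 stored inside the header itself. The following is a minimal sketch, assuming the standard 92 byte header layout (signature at offset 0, header size at offset 12, CRC32 at offset 16); the demo header is synthetic and the function names are made up for illustration.

```python
import struct
import zlib


def gpt_header_ok(header):
    """Check the signature and CRC32 of a raw GPT header."""
    if header[:8] != b"EFI PART":
        return False
    header_size, stored_crc = struct.unpack_from("<II", header, 12)
    if header_size < 92 or header_size > len(header):
        return False
    # The CRC is computed with its own field treated as zero.
    zeroed = header[:16] + b"\0\0\0\0" + header[20:header_size]
    return (zlib.crc32(zeroed) & 0xFFFFFFFF) == stored_crc


def build_demo_header():
    """Build a synthetic 92-byte header with a valid CRC (demo data only)."""
    body = bytearray(92)
    body[0:8] = b"EFI PART"
    struct.pack_into("<II", body, 8, 0x00010000, 92)  # revision, header size
    crc = zlib.crc32(bytes(body)) & 0xFFFFFFFF
    struct.pack_into("<I", body, 16, crc)
    return bytes(body)


primary = build_demo_header()
damaged = bytearray(primary)
damaged[40] ^= 0xFF  # flip bits in one field to simulate corruption

print("primary ok:", gpt_header_ok(primary))
print("damaged ok:", gpt_header_ok(bytes(damaged)))
```

If the primary fails a check like this, a tool can fall back to the other copy.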

Since they are 32 bit (or wider) programs they can hold longer sector numbers in memory to seek deeper into an LBA drive, and aren't limited by the MBR-LBA limit of 2 TB on storage devices with 512, 2048 or 4096 byte sectors. Some later 16 bit BIOSes could work with larger byte sector discs, but not all.

These 32 bit programs can also be more complex than the 16 bit programs that fit in a bootsector. While MBR could load overlay programs to do something similar, few hard drive manufacturers released the hardware specific details to make this possible, and moving the ability into programs loaded by UEFI made it easier for it to be broadly accepted.

.. to be continued

8/19/2018

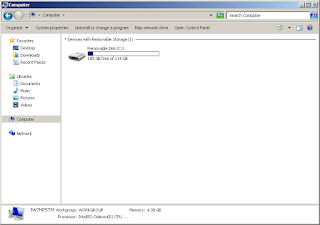

Win2Go Win7 on an HP Stream G2, booting from UEFI, GPT, NTFS

You're not supposed to be able to do this, for several reasons.

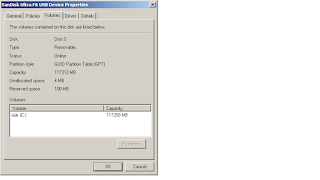

First, Windows 7 up through Windows 10-1703 would "recognize" a USB Flash drive as type "Removable" and (A) prevent creating multiple partitions, so no separate EFI partition was possible, and (B) if multiple partitions were somehow created on another operating system and present, it would enumerate with a drive letter only the "First" Primary partition it found with a supported file system, or the "First" Logical partition if no others were found. Since Windows always created three to four partitions in the order EFI, WinRE, Boot/System, Recovery, the Boot/System partition would never be enumerated and thus setup files could not be copied to it.

Certain [very expensive] "Certified for Windows To Go Win8, Win10" USB flash drives for the elite editions could have their Removable (RMB) "bit" set in software to "announce" themselves as disc type "Fixed", which would allow Win8 or Win10 to create special Windows-To-Go bootable discs.

And if you actually did have a special certified disc but not a special edition of Win8 or Win10, Windows setup would refuse to continue at the point when you had answered all of the prior interview questions and committed to install. The message would say USB and IEEE1394 connected devices were not supported for install. This includes SSD or external hard drives connected by a USB or Firewire connection.

This is a lot of barriers stacked against even the possibility of making this happen.

Most bootable USB Windows To Go builder tools can't work around all these barriers. Some "will" partition the whole drive as an MBR disc, format one partition as FAT32 and copy a SysPrep style unattended setup to it, to install Windows 7 regardless of the barrier against installing Windows 7 on a USB or Firewire disc. This gets around the barrier by "by-passing" the "guided interview" step that allows the Setup program to detect an unsupported device and halt the setup.

Then you would have to have a BIOS that supports USB 2.0 booting from an MBR disc, has Legacy CSM and can boot Unsecure (unsigned) kernels like Windows 7. This type of computer all but disappeared after 2012, when USB 3.0 ports became the norm on nearly all platforms seeking to support Windows 8.0. Windows 7 does not come with in box device drivers for USB 3.0 ports, and certainly not Boot time enabled "signed" ones. Without a boot time device driver to power up and enable the port and to enumerate and present any attached USB device, the storage for the Boot/System volume is simply not available to the Windows kernel and start up would halt.

You can auto install a selection of USB 3.0, NVMe and AHCI device drivers into all of the boot.wim and install.wim "clg" or class group (editions or versions) on a multi-install media ISO and build an MBR bootable SysPrep installer. Different USB 3.0 hardware controllers exist, though, and for those you can use the dism.exe tool to manually /forceunsigned install more drivers, which will prevent a STOP 0xc0..07B error.

You [can] rearrange and partition two partitions on an uncertified USB flash drive so that a native EFI-FAT32 partition exists, which a BIOS looking for a GPT partitioned disc [can] find and offer to auto boot. The trick is placing the NTFS partition [before] the EFI partition rather than [after], which is the "norm" with the Windows Setup partition creation step (and rather un-intuitive). The BIOS UEFI-GPT-FAT32 routine can quickly find the EFI partition by its format type (FAT32), even on a large GPT disc, and find the UEFI bootloader and the BCD store that directs it to the NTFS partition. The bootloader then loads the USB 3.0 device drivers marked with the boot start-type into memory before the Windows kernel, making the USB 3.0 ports live and the devices attached to them accessible for fetching the remainder of the Windows System, bootstrapping the SysPrep installer and completing the normal Windows installation.

You can take advantage of those [pre-existing] NTFS then EFI partitions to make a Boot and System partition with a bootable SysPrep image for Windows 7 and enable complete setup and install.

That seems a lot of balls to juggle.

But this has usefulness beyond this "one application". It means that USB 3.0 devices other than flash drives attached to USB 3.0 ports can be used as installation targets, including USB Fixed HDD drives, which are normally excluded from the Setup program as a target, and Firewire attached drives. External SSD and IEEE 1394 devices are generally faster, more reliable and easier to find than a high capacity USB flash drive, which might wear out sooner rather than later. But with 3 and 5 year Flash Drive warranties it's mostly a personal file backup hygiene issue. Backup4Sure is a really good automated folder-to-zip-container backup tool for this purpose.

In old Apple terms this is a [Target Mode] boot capability for all Windows versions, allowing many recovery and backup scenarios, or forensic and diagnostic modes, on current and UEFI only hardware. CSM and GOP boot gates are still a minor nuisance, but can be circumvented to permit pure UEFI environments to also work the same way.

Ultimately this all boils down to a simple setup procedure.

The maintenance and adding of device drivers now depends on one command line command for adding new device drivers to the offline image while the USB drive is plugged into a Win7 or higher computer and its mounted as a plain file storage device.

No .wim files, No .vhd or .vhdx images or ISO images are used on the USB flash drive, they are all plain and simple NTFS file system files.. and thus are not limited by any 4 GB file system limits as might be imposed by using a FAT32 file system for the flash drive. Any file can be updated on another system or copied and replaced at any time.

It's a very flexible, low maintenance and very 'familiar' tech support style, approaching that which was available with DOS FAT discs many years ago. Even SSD portable drives were excluded from being used as "bootable" Windows To Go devices with Windows 7.

I used a [ SanDisk Ultra Fit USB 3.0 - 128 GB - 150 MB/s - Flash Drive ] for this, which is roomy and fast, but it should work with many other styles and types of USB 3.0 media. The onboard eMMC flash on the HP Stream G2 was undetected and inaccessible. It is inexpensive storage but hard to replace or upgrade. It also holds a copy of Windows 10, so it can be used as a backup method of booting the netbook, and for backing up or copying files to the USB 3.0 flash drive when Windows 10 is taking the lead as the "Online" operating system. Windows 10 can fully see and enumerate the NTFS partition on the flash drive and natively mount, read and write the NTFS files on it. For some reason, however, the Windows 10 native boot is vastly slower than booting from the USB 3.0 flash drive, and much, much slower at shutdown. Performance wise I do not know if this is a failing in the Windows 10 operating system itself, or whether it is due to the poorer performance of the onboard eMMC storage that is built into the netbook.

In any event it is quite a flexible and high performance upgrade to downgrade from Windows 10 to Windows 7 and boot from the USB 3.0 flash drive.

8/14/2018

How to Boot, Win7 on Braswell N3050 - HP Stream 11 G2

First a little background.

I like the Intel Atom series of netbooks. They are fast, targeted and really light on battery power, they tend to last all day and were made with some really up to date tech until Windows 10. The later generations included Windows 8 or Windows 10 "exclusive" models with eMMC memory for boot media.

The problem with eMMC is that it is low tech and non-standard, as in it's usually bonded directly to the board in a random fashion on a netbook or phone, such that where and how to access it is unpredictable and depends mostly on the vendor or manufacturer to provide a custom device driver for the item.

Windows 8 did come with eMMC drivers, and netbooks did come with a Bios or UEFI boot manager which could pick up the threads and load the OS into memory, but it was "very slow" and prone to wear and nothing like an SSD. eMMC is the same kind of flash typically used in memory cards for storing photographs, with only a simple built-in controller, and it's just awful in general. It is better off ignored. And when eMMC burns out it can't be replaced.

Later versions of the netbooks included a "standard" USB 3.0 port or all USB 3.0 ports, which is a lot faster than USB 2.0. Then "Windows To Go" was introduced for Windows 8, but not Windows 7. And Windows 7 does not have bootable USB 3.0 device drivers.

The "trick" however is to create a "Windows To Go" like install of Windows 7 on a USB 3.0 or USB 2.0 class flash drive and boot from that.

"Windows To Go" is not like a bootable LiveCD or ISO and doesn't entirely run from a ramdisk in memory, so effectively it is a full resource operating system running from a fast, not quite SSD, flash drive. And since USB 3.1 flash drives are available, even with their own SSD controllers external to the netbook, they can be even faster than the eMMC built into the laptop. The external accessibility of the flash drive also makes it replaceable / repairable should something happen to the boot media, and at 128 GB or 256 GB or beyond the storage of the netbook becomes nearly unlimited.

Samsung, SanDisk, Kingston and Crucial offer some nice "Low Profile" USB flash drives in 128 or 256 GB capacities which barely rise out of the Left side of the laptop and provide a suitable boot target.

In my case I used a microSD card in a chip sleeve to mount it in the USB 3.0 port. The HP Stream 11 does have a microSD slot, but the Bios is unable to boot from it directly, although the UEFI boot manager "might" if used in non Legacy mode. That however is research for another day. Besides, leaving the SD slot exposed means even more media diversity. There are two USB 3.0 ports, one microSD port and one HDMI port, so sacrificing one USB 3.0 port for a "standard" boot drive keeps many options open.

AOMEI Partition Assistant Standard Demo / Freeware includes a "Windows To Go" creator feature for Windows 7, 8 and 10, independent of the tools available in Windows 8 or Windows 10. It requires a bootable USB target which must already have been formatted with exFAT or NTFS in order to be detected. It can't see the USB drive if it's not formatted. It will wipe and reformat the drive while prepping for the W2G install, but the prerequisite is that a quick format must already have been performed, just so the target can be detected.

Once it is detected and selected, an ISO or WIM image must be selected as the "source" of the install files. It's important to note that while ISO is "convenient", many ISO images contain more than one Install.wim file source and AOMEI will only use the first one found. Thus for a generic Windows 7 ISO it will install "Windows 7 Home Basic", which may not be the version / edition that was actually desired. Extracting the specific (Install.wim) file for the version of Windows desired, using 7Zip or Dism, will allow choosing the specific windows version / edition to create W2G media.

Next, executing the create function will mount and (very) slowly create the W2G installation. Note it is not creating a "boot image" but instead actually going through all the steps of formatting the media, mounting the install .wim file and extracting the files to build a 'Panther SysPrep' preboot installer / WinPE image on the W2G usb boot media. It can take an hour or longer. The Progress bar will creep along and reach 00:00 minutes left and then Pause for a good while as it completes the SysPrep image and dismounts the install .wim image, all of which takes a good deal of time (after) all the files have been copied and the Progress meter reaches 00:00. It will produce a separate Pop-Up window that says "Finished" when done and then "Revert" to go again (do not do this, you are done).

Once this is done the boot media is complete, but (will not work) on HP Stream 11 G2 Braswell, (until) additional drivers are installed into the "Offline" windows install on the USB flash drive.

The reason is the Braswell generation has (only USB 3.0 ports), there are no USB 2.0 ports, and Windows 7 cannot load an [On Demand USB 3.0 driver] during install or after install in order to retrieve its other Windows operating system files.

Braswell however was made by Intel, and they made a NUC Kit, the NUC5PPYH, which used the chipset and included a USB 3.0 device driver as well as an Intel Graphics Driver. Both will be needed, but the USB 3.0 driver is the most important for completing a successful boot.

USB_3.0_Win7_64_4.0.0.36.zip

GFX_Win7_8.1_10_64_15.40.34.4624.zip

Before installing the drivers to the USB flash drive in an "Offline" fashion, they must be extracted, and just the drivers isolated and placed in a directory which can be recursively scanned and then applied to the Offline SysPrep install on the flash drive.

Do this by opening the USB 3.0 driver zip with 7Zip and copying the

\HCSwitch

\Win7

folders to

C:\wim\usb3

then

Plug the USB microSD card into a slot and make sure it shows up as E:\

then

"Remove" the x86 version directories from \HCSwitch and \Win7 (recursion will scan folders and try to install all drivers)

then

"Edit"

C:\wim\usb3\Win7\x64\iusb3hub.inf

C:\wim\usb3\Win7\x64\iusb3xhc.inf

"Seek out" and change (from 3 or OnDemand) the StartType to (0) to make them "boot drivers" that must be loaded by the windows bootloader into active memory before turning over control to the kernel

C:\wim\usb3\Win7\x64\iusb3hub.inf

-------------------------------------

[IUsb3HubServiceInstall]

DisplayName = %iusb3hub.SvcDesc%

ServiceType = 1

StartType = 0

C:\wim\usb3\Win7\x64\iusb3xhc.inf

-------------------------------------

[IUsb3XhcModelServiceInstall]

DisplayName = %iusb3xhc.SvcDesc%

ServiceType = 1

StartType = 0
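
If you would rather not hand edit both files, the StartType change can be scripted. This is only a sketch: it rewrites every `StartType = ...` line it finds to 0 and leaves any trailing comment alone. The function name is made up, and you should check the encoding of the real .inf files before reading and writing them back.

```python
import re

# Matches "StartType = <value>" at the start of a line, keeping the left side.
START_TYPE = re.compile(r"^([ \t]*StartType[ \t]*=[ \t]*)[^\s;]+",
                        re.IGNORECASE | re.MULTILINE)


def make_boot_start(inf_text):
    """Return INF text with every StartType value replaced by 0 (boot start)."""
    return START_TYPE.sub(lambda m: m.group(1) + "0", inf_text)


sample = """[IUsb3HubServiceInstall]
DisplayName = %iusb3hub.SvcDesc%
ServiceType = 1
StartType = 3
"""

print(make_boot_start(sample))
```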

Then add the drivers to the "Offline" SysPrep Installation (since the folders on the USB drive are not in a WIM file you do not have to mount the WIM file first, simply target the E:\ drive).

Switching from an "On Demand" driver to a "Boot" driver startup type will throw an Error if you don't "forceunsigned" add them, because normal boot drivers are "boot signed".

If you "/forceunsigned" install the drivers, then they will go ahead and install.

If you "/Recurse" install, the Dism command will search all the folders and subfolders for ".inf" setup information files (.inf is short for "information").

C:\>dism /Image:E:\ /Add-Driver /Driver:C:\wim\usb3 /forceunsigned /Recurse

Installing the Graphics driver is slightly different.

Begin by extracting only the

\Graphics

folder to

C:\wim\Graphics

The Windows system about to install the drivers will detect this as a "foreign" driver and automatically [Block] it from install, so attempting a Dism install will result in failure.

The failure will be indicated in the E:\Windows\inf\setupapi.offline.log file:

!!! flq: CopyFile: FAILED!

!!! flq: Error 5: Access is denied.

!!! flq: Error installing file (0x00000005)

!!! flq: Error 5: Access is denied.

! flq: SourceFile - 'C:\wim\Graphics\iglhxa64.vp'

flq: TempFile - 'E:\Windows\System32\DriverStore\FileRepository\igdlh64.inf_amd64_neutral_bfb4178e08406e39\SET893B.tmp'

! flq: TargetFile - 'E:\Windows\System32\DriverStore\FileRepository\igdlh64.inf_amd64_neutral_bfb4178e08406e39\iglhxa64.vp'

<<< [Exit status: FAILURE(0x00000005)]
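
When the log gets long, the failures can be picked out with a few lines of script. This is a rough sketch that assumes lines starting with "!!!" are the errors, as in the excerpt above; the sample text is a shortened copy of that excerpt.

```python
def find_failures(log_text):
    """Return the log lines flagged with '!!!' (treated here as errors)."""
    return [line.strip()
            for line in log_text.splitlines()
            if line.lstrip().startswith("!!!")]


sample = """!!! flq: CopyFile: FAILED!
!!! flq: Error 5: Access is denied.
! flq: SourceFile - 'C:\\wim\\Graphics\\iglhxa64.vp'
<<< [Exit status: FAILURE(0x00000005)]
"""

for line in find_failures(sample):
    print(line)
```

An "Access is denied" error on a file you just extracted is the hint that the files are still blocked.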

In order to fix this, all the driver files must be [unblocked]. You can do this one at a time with right-click Unblock, but you can also use the [Sysinternals] "Streams64.exe" tool to do so for the entire directory at one time.

Download

Streams v1.6

Extract Streams64.exe and place it someplace in the path like C:\Windows

This provides a guide:

How to bulk unblock files in Windows 7 or Server 2008
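
For the curious, the "block" is just a small alternate data stream named Zone.Identifier attached to each downloaded file. As an alternative sketch (not what I used, and only meaningful on Windows with NTFS), the same bulk unblock can be attempted from Python by deleting that stream from every file under a folder. The function names are made up for illustration.

```python
import os


def zone_stream(path):
    """Return the name of the Zone.Identifier stream attached to a file."""
    return path + ":Zone.Identifier"


def unblock_tree(root, remove=os.remove):
    """Try to delete the Zone.Identifier stream from every file under root."""
    unblocked = []
    for folder, _dirs, files in os.walk(root):
        for name in files:
            path = os.path.join(folder, name)
            try:
                remove(zone_stream(path))
                unblocked.append(path)
            except OSError:
                pass  # no such stream: the file was never blocked
    return unblocked


if __name__ == "__main__":
    for path in unblock_tree(r"C:\wim\Graphics"):
        print("unblocked", path)
```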

And finally apply the driver

C:\>dism /Image:E:\ /Add-Driver /Driver:C:\wim\Graphics /forceunsigned /Recurse

Deployment Image Servicing and Management tool

Version: 6.1.7600.16385

Image Version: 6.1.7600.16385

Searching for driver packages to install...

Found 1 driver package(s) to install.

Installing 1 of 1 - C:\wim\Graphics\igdlh64.inf: The driver package was successfully installed.

The operation completed successfully.

C:\>

First Install

Booting from the USB flash drive may require "catching" the Bios [Press 'ESC' and then Press F9] in order to select to boot from the USB drive rather than the eMMC -- I'm still working on a smoother way to do this.. but for now.. catch and redirect to the USB drive.

W2G on First introduction to a new system "Profiles" the hardware, and essentially performs a complete SysPrep to detect and configure the Operating System.

If the USB 3.0 drivers are Boot drivers, the boot loader will load them into memory before turning control over to the boot kernel, so the file system on the USB drive will remain available to the kernel and the install will proceed as a normal setup (for the First Time). On subsequent boots this will be skipped and it will just boot from the USB flash drive quickly, as if it were a native HDD.

A single reboot will be necessary, during which the Graphics driver and the User profiles will be set up and you will be logged into the desktop. Depending on the original [ Install.wim ] file used to create the W2G USB drive, 'Activation' may require different keys and procedures in order to activate the operating system.

WiFi Networking and Audio

The Graphics driver also included directories with Audio drivers to drive the speakers. You could have copied those and installed them at the same time as the Graphics driver, or you can install them post setup.

The WiFi networking for the HP Stream 11 G2 requires

AC-7265 driver set

additional device drivers for the NUC can be found here

NUC5CPYH

7/15/2018

Boot Win7 from eMMC flash, a different approach

One of the reasons a Thin PC is chosen is the low cost, and that means cheap memory, eMMC. Since that didn't standardize until late Windows 8.1 days, there wasn't a common denominator to produce a common eMMC driver for boot or normal access.

However there have been LiveCD approaches since Make_PE3 and WinBuilder that booted XP or Win7 from Ramdisk space. Essentially eMMC isn't great for Read and Write cycles and really needs to be cared for by the Operating System or it will prematurely burn out. Windows XP and Windows 7 did not have a great TRIM command for supporting SSD and certainly didn't support eMMC at all. So booting from a ramdisk and "staying" in Ramdisk space will lengthen the life span of an eMMC boot device. Ram is designed for R/W and should last a normal lifespan.

Also there is the updates and virus angle to consider.. Updates are brutal on OS stability and long term they fragment and degenerate an OS until the entire Operating System has to be upgraded fresh.

It's the data that is transient and needs to be copied from one install to the next.

Viruses and malware may insert themselves into boot media and hdd images, but can only temporarily infect or corrupt data while a LiveCD is in memory. Upon reboot the virus or malware will be wiped clean, which is the strategy of steady state, or powerwash, pursued by Microsoft and Google at this time. In a portable device this reboot cycle can be quite frequent and narrows the window of time in which a virus or malware has to operate, and if it's airgapped, narrows it to approximately zero.

Thus one alternative to booting off eMMC directly, is to use Linux grub to boot a LiveCD image of XP or Windows 7 into memory and run exclusively in RAM space and be unable to touch eMMC sd space.. effectively relegating the device into a power compute module which needs supplemental storage.. be that cloud or usb disk space.. which can be attached to after the LiveCD boot.

Turning a disadvantage into an advantage.. and also lessening the urgency of Updates.. until a stellar era in which virus and malware can infect near "instantaneously" upon joining the Net.

6/19/2018

Expandable, Modular, Repairable - Component, HDMI video recorder

Up to HD 2K, game capture latency drove the demand for HDMI splitters, which sometimes didn't implement HDCP copy protection. So any HDMI card could be used to capture HD if caught off a splitter, but it wasn't by design, and export controls actively work to find those splitters and drive them out of business as quickly as possible. Since HD 4K it's my understanding that loophole has been plugged.

That leaves Component input on legacy DVD recorders and some PC capture cards. The quality of legacy DVD recorders with Component input was poor and didn't really suit their purpose, SD video capture. It was overkill bandwidth wise and the cables cost more, going from three cables for audio+composite to five cables for audio+component. Only Laser disc could really use the bandwidth, and it was a niche market.

And that comes to today and the home theatre pc market, also vanishing.. but more from archival apathy.. and an over abundance of trust in the cloud and belief that Copyright will be offset by Lifetime viewer rights.. tho.. if content owners could selectively erase human memories.. I think they would be overjoyed.

IMO.. Magewell makes a very nice "tunerless" capture card that works with windows, linux and mac in PCIe and USB form. It has a well defined built-in full frame TBC, DNR and Y/C comb filter with proc-amp. Full retail is around 300 usd, ebay sometimes 100 usd. The drivers adopt the most popular api for each platform so it works with virtually any software. But being "tunerless" it's not exactly on the typical home theater pc enthusiast radar. It's more "archivist" or content collector targeted.

What made the DVD recorder especially useful in my opinion was the remote, and simplicity of the task.. collect content, permit limited editing and burn to disc. Compressing and moving all those bits, even by ethernet was just too slow, and DVD-RAM never quite supplanted the write-once and done DVD-R backup.

Finding that simplicity on a pc is very difficult, unless you walk a fine line and don't try to complicate things.

The single simplest, most familiar interface on the pc for manipulating video content is Windows Media Center. Deprecated in 2010, it's increasingly hard to find, so it is itself becoming "legacy". However, at least on Windows 7, it is still under support until 2020 and somewhat accessible. Windows Media Center has a partner remote, and can record "live content" from a tunerless input card if it detects an RC6 WME IR blaster. These recordings get cataloged into its library and can be added to a playlist and burned to DVD and, in theory, Blu-ray.

That's the theory anyway.. and I'm pursuing it as quickly as I can to confirm.

I really like DVD recorders.. some of the last ones are all linux based and have a lot of upgrade potential.. upgrading to HDMI or component input and blu-ray may be possible, someday, but their time post-burner phase has not yet come. They are too valuable as they are for the moment to the people spending a lot of money for them on the secondary market.

A lot of the lessons learned about VCRs with DNR, line TBC vs frame TBC and frame synchronizers, IRE, proc-amps and more are still applicable to an expandable, modular, repairable Component or HDMI recorder. That doesn't help with the EPG or Tuner problems, but for the archivist little is lost from a skills perspective.

ps. One thing to note about Component vs HDMI recording is that there is no known Copy Protection signal mitigation for false positives readily available. In the past Video Filters or something like a Grex could be used to silence the inaccurate signal degradation, whether on purpose or by accident or the result of noise.

" it is also beyond my knowledge to even know if the macrovision I, II signals that affect VBI could effectively be blocked because Components R,G,B is digital and not analog.. however there are other levels of macrovision and CGMS flags as well now.. and Components digitizing chips recognize and honor these".

A popular method might be to use a Component to S-Video converter that then runs through an S-Video Copy Protection mitigator, then back through an S-Video to Component converter, but this reduces the value proposition of using Component by also causing picture quality degradation.

Also Component did not have a WSS or Wide Screen Signal "flag" procedural "standard" for advising the display device when a signal was being output in an anamorphic format (tall and skinny) that "should" be displayed on a widescreen display in an expanded pixel aspect ratio. While one could be added "later" after capture, or with a specific "in-line" box for this purpose, it was not "the norm" and complicated the use of Component out from sources or Set Top Boxes capable of outputting an anamorphic widescreen signal. In the beginning this wasn't much of an issue, but as DVD content became increasingly anamorphic and some cable channels would switch between 4:3 and 16:9 it has become an annoyance.

HDMI generally avoided most of the WSS problems by properly supporting it, and since the Copy Protection mitigation was a result of an oversight for lower resolution 2K signal splitter devices with low latency for game play and game recording.. temporarily at least.. HDMI has some advantages over Component recording.

6/07/2018

Waveform and Vectorscope, Bar signal on a Pedestal

I bought a Leader 5860c Waveform Monitor and 5850c Vectorscope from 1989 last weekend. Setting them up was a challenge.. this is that story.

The Waveform Monitor wasn't as much of a challenge.

Basically it has BNC composite inputs, and I had to get some adapters for my composite cables to convert them over and connect a VCR and a Time Base Corrector to its Input.

The Time Base Corrector could also serve up a 75% Color Bar signal, which produces the usual Stair Steps seen in so many old black and white photographs. That also let me find and recognize the dual side by side field 1 and field 2 "humps" and the full front porch and back porch of each in the center, along with the IRE (set-up) or Pedestal signal that picks up the Black level in North American video signals and "sets it" on a Pedestal just above the sync blanking level.

Even though I "sort of had guidance from a PDF manual" it was for the wrong vintage and kind of vague about terms and very short.

I learned I had to DC restore the signal to keep it from drifting up and down because the signal is by default coupled to the Input as an AC signal about a sync level used to represent the center point of the overall video signal. The Monitor had a simple button for DC restore and focused the signal on its reference point.

I could then move the signal up and down and left and right with some alignment controls, and rotate the "horizontal level" of the scan using a small tool and a trimmer in the upper left hand of the monitor's faceplate.

Scaling was automatic (or "Calibrated") or manual (or "Uncalibrated"). When snapped into the Calibrated position, the scale on the "graticule" represents the signal in terms of IRE units instead of voltages.

Good since most literature concerns itself with IRE units and not actual "voltage units".
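For anyone who does want the voltages, here is a rough sketch of the conversion. It assumes the usual NTSC convention that a 1 volt peak-to-peak composite signal spans 140 IRE units (sync tip at -40, blanking at 0, peak white at 100), so one IRE unit works out to roughly 7.14 mV. The function names are just mine for illustration.

```python
# Assumption: NTSC composite video at 1 V peak-to-peak spans 140 IRE
# (sync tip at -40 IRE, blanking at 0 IRE, peak white at 100 IRE).
MV_PER_IRE = 1000.0 / 140.0  # roughly 7.14 mV per IRE unit


def ire_to_mv(ire):
    """Convert an IRE level to millivolts relative to blanking (0 IRE)."""
    return ire * MV_PER_IRE


def mv_to_ire(mv):
    """Convert millivolts relative to blanking back to IRE units."""
    return mv / MV_PER_IRE


if __name__ == "__main__":
    levels = [
        ("sync tip", -40.0),
        ("blanking", 0.0),
        ("pedestal", 7.5),
        ("peak white", 100.0),
    ]
    for name, ire in levels:
        print(f"{name:>10}: {ire:6.1f} IRE = {ire_to_mv(ire):7.1f} mV")
```

So the 7.5 IRE Pedestal is only a few tens of millivolts above blanking, which is why it is much easier to read off the graticule than to think about in volts.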

I then put a SignVideo Proc-Amp into the signal path and played with its four controls

1. Black

2. Contrast

3. Saturation

4. Tint

The first two (Black and Contrast) allowed me to move the "floor" or blackest black level of the video signal relative to the "center point" sync reference level. But that also had a slight effect on the top of the signal represented by the whitest "white" or brightest signal on the screen.

While the video signal on the monitor represents "Luma" or Brightness irrespective of Color.. each color bar has a declining "brightness" on purpose to create the stair steps. Left to right they fall off in perfect step with the bars on a normal video monitor.. but do not represent any color information.
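The stair steps can be roughly predicted from the bar colors. This is only a sketch under my own assumptions: the standard-definition luma weights (0.299 red, 0.587 green, 0.114 blue), bars at 75% amplitude, and a 7.5 IRE Pedestal. The names are made up for illustration and a real generator may differ a little.

```python
# Assumptions: Rec. 601 luma weights, 75% amplitude bars, 7.5 IRE setup.
BARS = [
    ("white",   (1, 1, 1)),
    ("yellow",  (1, 1, 0)),
    ("cyan",    (0, 1, 1)),
    ("green",   (0, 1, 0)),
    ("magenta", (1, 0, 1)),
    ("red",     (1, 0, 0)),
    ("blue",    (0, 0, 1)),
]


def luma(r, g, b):
    """Brightness of a color, ignoring the color information itself."""
    return 0.299 * r + 0.587 * g + 0.114 * b


def bar_ire(rgb, amplitude=0.75, setup=7.5):
    """Luma level of one bar in IRE units, sitting on top of the pedestal."""
    return setup + (100.0 - setup) * amplitude * luma(*rgb)


def stair_steps():
    """Return (name, IRE level) for each bar, left to right."""
    return [(name, bar_ire(rgb)) for name, rgb in BARS]


if __name__ == "__main__":
    for name, level in stair_steps():
        print(f"{name:>8}: {level:5.1f} IRE")
```

Each bar comes out lower than the one to its left, which is the staircase seen on the Waveform Monitor.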

This is exactly so that the Black control only affects the blackest black of the overall video signal.

But after that adjusting the Contrast raises and lowers the top of the whitest or "brightest" color bar so that it could be set to IRE 100 .. or perhaps lower. IRE 75 is quoted as common, as are IRE 85 and 95 .. as a hedge against signal sources that may "overdrive" or "blow out" the perceived exposure.. losing details in the "wash". This is called "clipping" and is to be avoided.

Clipping can also occur at the other end of the scale in the Blackest Black floor.. the goal is to keep tweaking to get most of the signal, most of the time to remain between these extremes.. which can depend upon the exact source used.. but the color bars serve as a first approximation and allow for some sand bagging of the range to protect against "clipping" at either extreme.
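If the signal levels were available as numbers, the "most of the signal, most of the time" idea could be checked with something like the sketch below. It assumes a list of luma samples already expressed in IRE units, with a floor of 7.5 and a ceiling of 100. Both limits and the function name are my own choices for illustration.

```python
def clipped_fraction(samples_ire, floor=7.5, ceiling=100.0):
    """Return (fraction below floor, fraction above ceiling) for IRE samples."""
    total = len(samples_ire)
    if total == 0:
        return 0.0, 0.0
    below = sum(1 for s in samples_ire if s < floor)
    above = sum(1 for s in samples_ire if s > ceiling)
    return below / total, above / total


if __name__ == "__main__":
    # Hypothetical sample values, not measurements from a real tape.
    samples = [5.0, 7.5, 50.0, 100.0, 104.0]
    low, high = clipped_fraction(samples)
    print(f"crushed blacks: {low:.0%}, blown whites: {high:.0%}")
```

Lowering the ceiling to 85 or 95 in the call is the numeric version of sand bagging the range.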

So the Waveform monitor is for calibrating or setting the "Black and the White" extremes of the signal using a proc-amp. And setting the Black also adjusts the height of the Pedestal for the Blackest black.. which in North America would be IRE 7.5 high (very important for the Vectorscope).

Next was the Vectorscope.

It's similarly easy to connect a video input signal, but it displays its results in a Polar or radial graph display. Magnitude is by Radius from the center, the other coordinate being an Angular value from a Color Burst reference signal.. not unlike the DC restore recovered "center sync" reference for the Waveform monitor..

And like that DC restoration.. the Vectorscope has to "recover" the Color Burst angle and decode the position of all colors from the signal arrayed in a circular fashion around the graticule or "scale" on the Vectorscope screen.

I made a mistake in seeking to set IRE to 0 for my video signal using a proc-amp to generate the Color bar signals. This caused the Vectorscope to "free wheel" or "spin" like a car driver's steering wheel.. or strobe like the struts on the wheels of a car. I couldn't get it to stop spinning, even using the phase angle adjustment control repeatedly.

Once I switched IRE 7.5 (on) the bowtie pattern snapped on and stayed locked.

Also using a proc-amp as a color bar generator is not ideal.. in tiny fine print, it says you should also connect a video signal to the Input to the proc-amp composite input.. so that a "stable" color burst signal will be included with the color bars generated. This turned out to be true.

While acting as a bar generator the proc-amp cannot be used as a proc-amp, it locks all of its outputs to references.. presumably to act as a "standard" rather than a general purpose (much more expensive) tool.

Radius of each bowtie "spot" represents the relative "color saturation" for that color. As color video has a familiar palette with the bar pattern, each bar creates one spot in the general vicinity of the graticule regions labeled for their color. Angular offset from the color burst reference determines their "color".

So a second proc-amp can manipulate radius by increasing or reducing "Saturation" and this affects the entire constellation and overall "size" of the Bowtie.

While the same second proc-amp can manipulate "angle" by increasing or reducing "Tint" and this "Turns" the whole orientation of the Bowtie. The optimum goal being to adjust or tweak out common imperfections that lead to a "cast" or "overall" color problem that affects all colors equally.
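The radius and angle idea can be sketched in a few lines. This is only an approximation under my own assumptions: the common NTSC scaling of the color difference signals (U = 0.492 x (B - Y), V = 0.877 x (R - Y)), angles measured counter clockwise from the +U axis with the Color Burst sitting at 180 degrees, and full amplitude colors. The function names are hypothetical, and which way a real Tint knob turns the pattern is a guess.

```python
import math


def chroma_vector(r, g, b):
    """Return (radius, angle in degrees) of a color on an idealized vectorscope."""
    y = 0.299 * r + 0.587 * g + 0.114 * b
    u = 0.492 * (b - y)  # horizontal axis
    v = 0.877 * (r - y)  # vertical axis
    radius = math.hypot(u, v)
    angle = math.degrees(math.atan2(v, u)) % 360.0
    return radius, angle


def apply_proc_amp(radius, angle, saturation=1.0, tint_deg=0.0):
    """Saturation scales the radius, Tint rotates the angle (direction assumed)."""
    return radius * saturation, (angle + tint_deg) % 360.0


if __name__ == "__main__":
    colors = {
        "yellow": (1, 1, 0), "cyan": (0, 1, 1), "green": (0, 1, 0),
        "magenta": (1, 0, 1), "red": (1, 0, 0), "blue": (0, 0, 1),
    }
    for name, rgb in colors.items():
        radius, angle = chroma_vector(*rgb)
        print(f"{name:>8}: radius {radius:.3f}, angle {angle:6.1f} deg")
```

Because Saturation multiplies every radius and Tint adds the same angle to every spot, the whole Bowtie grows, shrinks or turns together, which is why these two controls can only fix an overall cast and not a single color.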

Individual colors which require specific tweaks to Saturation or Tint require the use of a "color generator" or "color corrector", and that is independent of the video signal itself.. that would be a manipulation to grant false "color" enhancement and isn't strictly a result of the signal path or current video signal regenerator. That would be more akin to using a "paint brush" to touch up a moving picture as opposed to "fixing" a video signal to be within specifications for broadcast. It is usually something more common in film and telecine, to add special effects, or to enhance a particular emotion or psychological setting in a scene rather than a strictly physical situation.

So I did notice that the arrangement of the proc-amp controls from Left to Right were not arbitrary, as each from

1. Black

2. Contrast

3. Saturation

4. Tint

tends to progress from the one a person would notice the "most" if left uncorrected to the one they would notice the "least".

So in this case Black offsets are noticed first, then Contrast problems, followed by Saturation problems and then Tint problems.

----+-- Black (bottom)

Y - | - ----- waveform monitor

----+-- Contrast (top)

|

----+-- Saturation (radius)

C - | - ----- vectorscope

----+-- Tint (angle)

5/28/2018

Digitizing Analog VHS tapes - to AVI or to DVD

Among "digitizers".. people who want to transfer or convert their VHS tapes to PC files or DVD media there are two camps.

First are the professionals who know every detail about quality and quantity and do it as a business.

Second are the casual users of VHS tapes, who have not used them in years or infrequently and now find they want to perform this quickly and as a means to finally get rid of the tapes.

Among the first category are websites and forums that are mostly going quiet these days, occasionally helping one another to care for the equipment they are using to make these conversions, and answering a few questions from "newbies" to the profession or hobbyists who happen to just be starting.

First it's important to understand the last VCR was made in 2016 and the tapes are also no longer being made. Every year the tapes get older and degrade and these forgotten memories move closer to oblivion.

Of the remaining VCRs most are not well maintained or cared for and decay from misuse.. or become damaged from being plugged into fluctuating power lines and lightning strikes. If they don't end up being recycled or tossed in the dump.. they are given away.. and a very few end up on eBay or Amazon or Craigslist as "used".

Among the second category users generally start out with a combo VHS to DVD or some USB dongle to perform the "captures" and are sorely disappointed with their results.. they turn to the web and find the "prosumer or professional forums" and discover a new world of choice and information that tends to overwhelm.

There is also almost a "stages of grief" that sets in from the gradual understanding that what they were attempting has many levels of quality and generally the professionals tell them they've been doing everything the wrong way.. so they get their standards wound up and upgraded to "pure" and "archival quality".. seeking legendary and near mythical "unobtainium" in the role of VCRs with digital noise filters and line and frame "Time Base Correctors"..

Eventually if they don't quit.. or run out of money and hope.. they discover the easier "MPEG2" path.. a lower bit rate and quality that for some is "good enough" and subscribes to a lower spec than "absolute perfection".

In or around 2003 to 2008 there was a fleeting moment in time when $500 to $1500 DVD recorders were "Staged" to replace the VCR as a means of copying broadcast television to DVD discs.

These could also be used to "capture" the VHS tapes being played back to DVD discs.

Unfortunately with success also comes the realization that the DVD could not hold as much per disc.. so people sought to edit out "commercials" or beginning and end credits for seasons of shows. Doing this by DVD recorder alone with no intermediary was "impossibly difficult".. enter the combo.. Hard Disk (HDD) and (DVD) recorder.. which could "Capture" even the longest tapes to its internal hard drive and let the user selectively edit and rearrange material and burn "title lists" to a single disc or break up the list and burn groupings of "title lists" to sequences of DVDs one after the other.

Great in theory and practice with a little experience.

But then all of the major makers of DVD recorders and HDD/DVD recorders disappeared one day.. and the remaining recorders aged and the DVD burners began to wear out.

So people then looked towards settling for capturing DVD quality to PC files.. but the capture equipment usually (with a few exceptions) would not allow copying the large MPEG2 files used to create DVD discs to a PC.

Which then brings us to the Home Theater PC.. a complicated mix of presentation and workstation editing capability. Generally these are not designed with editing and archiving in mind and support is near non-existent. The standards unlike DVD or MPEG2 for DVD, rove all over the file type landscape and confuse to no end.. mastering or "authoring" a DVD from files captured to a HTPC is a soul crushing exercise.

A single maker of an HDD/DVD recorder, Magnavox, lasted until 2017 and then mysteriously did not deliver a set of three new recorders in the last half of that year.. stranding many archivists with no way to finish their conversions.. or soldier on.

5/27/2018

fit-PC2i Atom 510 - Centos 6.9 i386

It comes from around the years 2008-2010

Many linux distros no longer support something so low power, or exotic.

However Centos 6.9 i386 will install on this device.

The IODD portable combo USB - CD/DVD rom drive emulator and simultaneous USB hard drive is a great way to boot quickly and switch between many ISO images. A special directory on the IODD is labeled ( _iso ) and in this directory .ISO images are placed.. a combo jogwheel and selection button on the side of the drive case allows scrolling between images inside this directory and "mounting them".. the selection is saved to a Fujitsu based microcontroller in the drive case and immediately this is presented as a USB attached CD/DVD rom drive with the selected image mounted as if it were an Optical disc.. no burning, no "actual" optical media needed.

Upon reboot the last selected disc image is automatically presented as a bootable device option. The drive case also simultaneously appears as a separate USB hard drive, which is very convenient for offloading or onboarding files to and from an operating system that can mount the virtual attached optical drive and virtually attached usb hard drive.
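Since the drive also shows up as a plain USB hard drive, getting a new image onto it is just a file copy into the ( _iso ) directory. A minimal sketch of that, assuming the drive is already mounted somewhere (the mount point below is made up) and that creating the directory when it is missing is acceptable:

```python
import shutil
from pathlib import Path

ISO_DIR_NAME = "_iso"  # directory name the IODD looks in, per the text above


def stage_iso(iso_path, drive_root):
    """Copy an ISO image into the _iso directory of the mounted drive."""
    src = Path(iso_path)
    if src.suffix.lower() != ".iso":
        raise ValueError(f"not an ISO image: {src}")
    target_dir = Path(drive_root) / ISO_DIR_NAME
    target_dir.mkdir(exist_ok=True)
    return Path(shutil.copy2(src, target_dir))


def list_isos(drive_root):
    """List the image names staged on the drive (lowercase .iso only here)."""
    return sorted(p.name for p in (Path(drive_root) / ISO_DIR_NAME).glob("*.iso"))


if __name__ == "__main__":
    # Hypothetical paths: adjust to wherever the image and the drive really are.
    stage_iso("CentOS-6.9-i386-minimal.iso", "/media/iodd")
    print(list_isos("/media/iodd"))
```

After the copy, the image still has to be picked with the jogwheel on the drive case before it will be presented as the optical disc.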

The fit-PC2i has a half height microSD slot for flash media or four USB ports, two Type A and two microUSB ports.. and a drive slot for a SATA drive.

Unfortunately the support site recommended Ubuntu Desktop 8.04 as a boot option.. but this had a "Bug" in that if a SATA drive were installed, it would not be seen by the boot installer kernel.. frustrating to say the least.. the Centos 6.9 kernel correctly "sees" the SATA drive, and using advanced options during partitioning.. even allows checking off drives to use, or unchecking off drives to not use when installing the linux operating system on the device hard drive.

This is a metal case, passively cooled device.. so it generally gets "hot" and a USB powered external fan like the "AC Infinity" line up with inline speed control and rubber shock absorbers makes a very low cost and effective cooling solution.. and is very quiet.

The dual LAN ports make this Ice Cream sandwich sized server a fairly flexible platform.

Toshiba xs54, xs55 - Net Dub (copy) to PC

Net Dubbing is a term for Network Copying or "Duplicating.. hence Doubling.. or Dubbing" a recording to another xs37 or other recorder.

As a hand held remote "workstation" for mastering and creating DVD recordings.. shuttling recordings before editing from one workstation to the other was taken into account as a desirable feature. The recordings are "not" transcoded but are at the same resolution as they were in when originally recorded.

PCs don't normally participate in Net Dubbing.. however they can with a simple protocol daemon that listens for a Netbios broadcast requesting XS recorders identify themselves with their Anonymous FTP server paths.

A simple systray windows application was created and released as Freeware. I modified the text labels for English and the result was a Virtual RD-XS recorder service for the PC.