12/30/2015

Fireshot Pro, How to revert to Lite after Trial

The Fireshot Pro 0.98.77 browser add-on has a problem on Firefox 41.0+ reverting to Lite if you decide not to license it on every machine you use. Here's how to fix that.

The add-on is a tool for capturing or archiving web content and then editing, saving or sharing it by more conventional means than a URL.

When you install the FireShot add-on, whether downloaded from the author's website or through Tools : Add-Ons > Add-ons Manager > Get Add-Ons, it "currently" installs as the FireShot Pro version in Trial mode.

You can also run into the problem if you already have it installed and "ever" clicked the FS Pro! button after capturing, or used the browser menu option Tools : FireShot > Switch to Pro!

Once it is in Trial mode, at the end of the 30 day trial it will begin tossing up pop-up messages that cannot be easily dismissed. They take you to a purchase page; however, the mechanism there for reverting to the Lite edition appears to have some problems.

The add-on is a wonderful product and worth the cost; however, the license is limited per user and to that user's personal systems. If you need a copy on a co-worker's personal computer, or for a family member who perhaps isn't going to use it as much, the Lite version is more than enough.

The fix is to close all open browser windows, open a regedit tool and "delete" the key string and value

HKEY_CURRENT_USER\Software\Screenshot Studio for Firefox\fx_prokey

Do not attempt to modify the value from "yes" to "no"; it will not be enough. In fact, after proving this doesn't work, returning to the key will reveal it has been "set back" to "yes".

The entire "fx_prokey" key must be deleted.
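For anyone who would rather script the deletion than click through regedit, here is a minimal sketch that only builds the `reg delete` command line. It assumes fx_prokey is a named value under the `Screenshot Studio for Firefox` key (the registry path above does not make clear whether it is a value or a subkey), and the function name is made up for illustration.

```python
import subprocess
import sys

# Registry location quoted in the post. Treating fx_prokey as a named value
# under this key is an assumption; adjust if it turns out to be a subkey.
KEY_PATH = r"HKCU\Software\Screenshot Studio for Firefox"
VALUE_NAME = "fx_prokey"


def build_reg_delete(key_path=KEY_PATH, value_name=VALUE_NAME):
    """Return the reg.exe argument list that deletes one value without prompting."""
    return ["reg", "delete", key_path, "/v", value_name, "/f"]


if __name__ == "__main__":
    command = build_reg_delete()
    print(" ".join(command))
    # Only touch the registry on Windows, and only when explicitly asked to.
    if sys.platform == "win32" and "--run" in sys.argv:
        subprocess.run(command, check=False)
```

As with the manual fix, close every browser window first so the add-on cannot write the value back.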

Note: This is an evolving bug/problem. It can arise for a number of reasons, and it conflicts and interacts with various features of Firefox >= 41.0. So this "may work" for you.. or it may not, or it may only be contributory. The author is aware of the issue and appears to be trying to debug it and help those who run into this bug on the product's forums.

Windows, How to firewall block a list of IP addresses

The method is not original; it's described in many places. This version was described in a posting here.

Step 1 - save the following to blockit.bat

@echo off

if "%1"=="list" (

netsh advfirewall firewall show rule Blockit | findstr RemoteIP

exit /b

)

:: Deleting existing block on ips

netsh advfirewall firewall delete rule name="Blockit"

:: Block new ips (while reading them from blockit.txt)

for /f %%i in (blockit.txt) do (

netsh advfirewall firewall add rule name="Blockit" protocol=any dir=in action=block remoteip=%%i

netsh advfirewall firewall add rule name="Blockit" protocol=any dir=out action=block remoteip=%%i

)

:: call this batch again with list to show the blocked IPs

call %0 list

Step 2 - save a list of IP addresses to blockit.txt

5.9.212.53

5.79.85.212

46.38.51.49

46.165.193.67

46.165.222.212

Step 3 - run the batchfile

a. [to read] blockit.txt and block ip addresses

c:\> blockit.bat blockit.txt

b. [to list] the ip addresses currently blocked

c:\> blockit.bat list

c. [to unblock] all of the ip addresses that were blocked

c:\> netsh advfirewall firewall delete rule name="Blockit"
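The batch file passes every line of blockit.txt straight to netsh. As a rough sketch of a pre-check, the Python below validates each entry and prints the same pair of inbound/outbound rules the batch loop would add. The function names are illustrative, and skipping `#` comment lines is my own assumption, not something the batch file does.

```python
import ipaddress

RULE_NAME = "Blockit"  # same rule name the batch file uses


def read_blocklist(lines):
    """Return the valid IP addresses or CIDR ranges found in an iterable of lines."""
    entries = []
    for raw in lines:
        text = raw.strip()
        if not text or text.startswith("#"):
            continue  # skip blanks and comment lines (an assumption, see above)
        try:
            ipaddress.ip_network(text, strict=False)
        except ValueError:
            continue  # ignore anything that is not an address or range
        entries.append(text)
    return entries


def build_block_commands(entries, rule_name=RULE_NAME):
    """Build one inbound and one outbound netsh block rule per entry."""
    commands = []
    for entry in entries:
        for direction in ("in", "out"):
            commands.append(
                f'netsh advfirewall firewall add rule name="{rule_name}" '
                f"protocol=any dir={direction} action=block remoteip={entry}"
            )
    return commands


if __name__ == "__main__":
    sample = ["5.9.212.53", "5.79.85.212", "oops", "46.38.51.49"]
    for command in build_block_commands(read_blocklist(sample)):
        print(command)
```

Printing the commands rather than running them makes it easy to eyeball the list before anything touches the firewall.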

12/19/2015

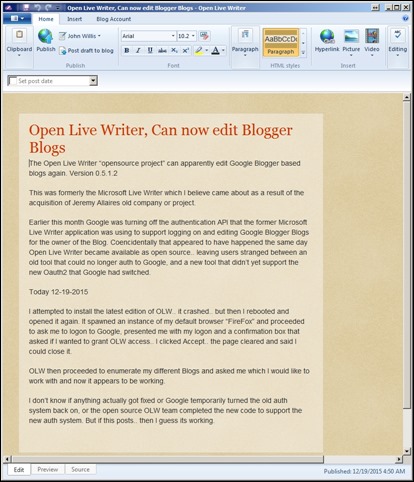

Open Live Writer, Can now edit Blogger Blogs

The Open Live Writer “opensource project” can apparently edit Google Blogger based blogs again, as of version 0.5.1.2.

This was formerly Microsoft's Windows Live Writer, which I believe came about as a result of the acquisition of J.J. Allaire's old company, Onfolio.

Earlier this month Google was turning off the authentication API that the former Microsoft Live Writer application used to log on and edit Google Blogger blogs on behalf of the blog's owner. Coincidentally, that appeared to have happened the same day Open Live Writer became available as open source.. leaving users stranded between an old tool that could no longer auth to Google, and a new tool that didn’t yet support the new OAuth2 that Google had switched to.

Today 12-19-2015

I attempted to install the latest edition of OLW.. it crashed.. but then I rebooted and opened it again. It spawned an instance of my default browser, Firefox, and asked me to log on to Google, then presented me with my logon and a confirmation box that asked if I wanted to grant OLW access.. I clicked Accept.. the page cleared and said I could close it.

OLW then proceeded to enumerate my different Blogs and asked me which I would like to work with and now it appears to be working.

I don’t know whether anything actually got fixed, Google temporarily turned the old auth system back on, or the open source OLW team completed the new code to support the new auth system. But if this posts.. then I guess it’s working.

12/18/2015

Windows 7, Notepad++, fixing drag and drop, right click, default to open

Notepad++

Problem Unable to Drag and Drop

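Fundamentally this is a "security" problem: Windows will not let a normal (non-elevated) Explorer window drop files onto a program that is running as Administrator.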

Fix - make sure it is not started [as Administrator] < this "Prevents" Drag and Drop Open File

Fix - open shortcut > Compatibility [tab] (uncheck) the box at the bottom under Privilege Level:

[ Run this program as an administrator ]

Fix - open shortcut > Shortcut [tab] : Advanced (button) (uncheck) the box

[ Run as administrator ]

Problem Unable to Right Click, Open "with"

> Choose to open [txt] files in Notepad++ > Using right click (Open with)

(Notepad++ does not appear as a choice)

> Choose default program... > Recommended Programs >

(Notepad++ "selection" does not "stick")

> Choose default program... > Other Programs >

(Notepad++ does not appear)

> Choose default program... > Other Programs > Browse to > C:\Program Files (x86)\Notepad++\notepad++.exe

(Notepad++ does not work)

Fundamentally this is a "missing" registry entry, a [program capable of..] registration issue. It's managed by the program itself and isn't "set up automatically" during install.

Fix - open Notepad++ : Settings : Preferences : File Associations : Supported Extensions : Notepad > "Select" .txt then [Move to >] Registered Extensions [box] then Close

11/21/2015

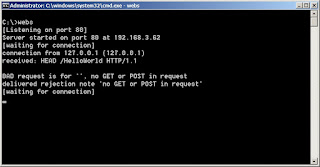

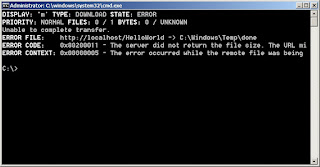

Windows, Batch File Internet calls

The Windows batch command line language can make use of the BITS service to send out a notice when a job is complete, or even a status message.

Since Windows 2000 there has been the BITS background downloader command. Called properly from the command line, it can be used to retrieve or upload files, or simply to perform an HTTP HEAD or GET against a web service or server. It is scheduled to be deprecated, but is still available in Windows 8. That covers a wide swath of Windows versions: 2000, XP, Vista, 7, 8 and their server versions.

Most web servers record HTTP requests, including the source IP address and the document being requested. This can be used as a default recorder for the messages.

Microscopic Web Server

Different versions of BITS behave differently.

Older versions may simply perform an HTTP GET

Newer versions perform an HTTP HEAD first, then a GET. On some web servers, receiving a HEAD request before a GET may produce an error on the server or the client if the 'out of order' HEAD request method comes first.

What BITS is attempting to do is to determine if the previous download has completed and decide whether to resume that download or begin a new one. The BITS client may proceed to the GET stage, but the web server may have already closed the connection.

So, with reservation, one should make sure the web server being used as the recorder for notifications can behave accordingly if the initial connection is from a version of BITS that precedes a GET with a HEAD request. IIS will support this.. other web servers may simply record the request and wait for the following GET request, others may close the connection.
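To make the HEAD-then-GET point concrete, here is a minimal sketch of a "microscopic" recorder built on Python's standard http.server. It answers HEAD and GET the same way and keeps an in-memory list of what was asked. The class and function names are illustrative, and it has not been tested against a real BITS client; it only shows the shape of a server that tolerates either request order.

```python
import threading
from http.server import BaseHTTPRequestHandler, HTTPServer

REQUEST_LOG = []  # in-memory stand-in for the web server's access log


class NoticeHandler(BaseHTTPRequestHandler):
    """Answer HEAD and GET identically so a HEAD-then-GET client is not refused."""

    BODY = b"ok\n"

    def _record_and_send_headers(self):
        # Record who asked, how, and for what, then send a plain 200.
        REQUEST_LOG.append((self.client_address[0], self.command, self.path))
        self.send_response(200)
        self.send_header("Content-Type", "text/plain")
        self.send_header("Content-Length", str(len(self.BODY)))
        self.end_headers()

    def do_HEAD(self):
        self._record_and_send_headers()  # headers only, no body

    def do_GET(self):
        self._record_and_send_headers()
        self.wfile.write(self.BODY)

    def log_message(self, format, *args):
        pass  # silence the default stderr logging; REQUEST_LOG is the record


def start_server(host="127.0.0.1", port=0):
    """Start the recorder on a background thread; port 0 picks a free port."""
    server = HTTPServer((host, port), NoticeHandler)
    thread = threading.Thread(target=server.serve_forever, daemon=True)
    thread.start()
    return server
```

Call `start_server()`, read the chosen port from `server.server_address[1]`, and call `server.shutdown()` when done.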

BITS will complete the request and return status information, which can be ignored. The real goal was to submit a one line informal message into the web server log in the form of a request which is logged.

This works well as a pseudo syslog service, or Internet enabled logging system.

Since the logs are "tagged" with the IP address of the remote system and any arbitrary strings in the request, multiple calls can be made from the batch script to provide a one-way channel for lots of diagnostic information, which can be filtered later and collated by IP, string or timestamp to reconstruct a running dialog with that batch file.
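The collation step could look something like the sketch below. It assumes the web server writes Common Log Format lines and that the batch script always requests a path starting with `/notice`; both are assumptions for illustration, as is the function name.

```python
import re
from collections import defaultdict

# Common Log Format is assumed here; other servers log differently.
LOG_LINE = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[(?P<when>[^\]]+)\] "(?P<method>\S+) (?P<path>\S+)'
)


def collate_by_ip(lines, marker="/notice"):
    """Group (timestamp, path) pairs by client IP, keeping only GETs of marker paths."""
    dialog = defaultdict(list)
    for line in lines:
        match = LOG_LINE.match(line)
        if not match:
            continue
        if match["method"] != "GET" or not match["path"].startswith(marker):
            continue  # drop the HEAD probes and unrelated traffic
        dialog[match["ip"]].append((match["when"], match["path"]))
    return dict(dialog)
```

Keeping only the GET lines avoids counting each notice twice when the client sends a HEAD first.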

There is also the possibility of formally "uploading" a status report for processing later, making this method even more robust. But really the goal for this demonstration is simply that a quick notification can be sent out by a Windows batch script, without resorting to PowerShell, VBScript or other heavier methods.
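To show the shape of such a notification, the sketch below builds the request URL and the one-line `bitsadmin /transfer` call a batch file could issue. The server name, field names and local file path are made up for illustration. Note that inside a real batch file any `%` in the URL would need doubling, so plain alphanumeric values are the safest.

```python
from urllib.parse import urlencode


def build_notice_url(base_url, **fields):
    """Encode arbitrary status fields into the query string of the request."""
    return f"{base_url}?{urlencode(fields)}"


def build_bitsadmin_command(job_name, url, local_file):
    """Return the one-line bitsadmin call a batch file could issue."""
    # bitsadmin wants a full local path for the (throwaway) downloaded file.
    return f'bitsadmin /transfer {job_name} "{url}" "{local_file}"'


if __name__ == "__main__":
    url = build_notice_url("http://logger.example/notice", host="pc01", step="done")
    print(build_bitsadmin_command("notify", url, r"C:\Temp\notice.tmp"))
```

The downloaded file is not the point; the entry left in the web server's log is.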

The Windows Update and Microsoft Update patch system relies upon this service, so even if the command is deprecated the service will apparently continue to be available from a cmdlet within PowerShell in the future. In that case a single PowerShell command could be issued from within a Windows batch file, so the approach covers Windows 2000 through Windows 10.

11/09/2015

Plex, play EyeTV Content in Firefox without Flash

Plex playback in Firefox can be a problem. It stops and starts, stutters or doesn't play at all. Here's how to fix that.

First, the Plex media server doesn't decide what the transcode parameters will be for a stream; that is decided by the player that requests the stream. If you are using the default player type for Firefox 41.0.1, that will be a Flash media player. That browser plugin does not allow changing any parameters for the stream request; they are hard coded.

Google Chrome, however, defaults to the HTML5 player and gives you control over the stream parameters. This can be important if the network connection is over WiFi, if the Plex server isn't up to the task of transcoding at the bitrate requested, or if for any other reason a sustained high speed connection cannot be maintained.

Firefox does have the ability to playback using HTML5 but it must be manually configured to prefer it over the flash media player.

First go to about:config in Firefox and tap (dbl click) the media.mediasource.whitelist Preference to set that to "false", then tap (dbl click) the media.mediasource.webm.enabled Preference to set it to "true".

The media player will now default to HTML5.
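If you would rather not double click through about:config on every machine, the same two preferences can be written as `user_pref()` lines for a `user.js` file in the Firefox profile folder. A small sketch, using the preference names and values given above; the helper name is illustrative and it only handles true/false values.

```python
# Preference names and values are the ones given in the post.
PREFS = {
    "media.mediasource.whitelist": False,
    "media.mediasource.webm.enabled": True,
}


def to_user_js(prefs):
    """Render boolean preferences as user_pref() lines for a Firefox user.js file."""
    lines = []
    for name, value in prefs.items():
        literal = "true" if value else "false"
        lines.append(f'user_pref("{name}", {literal});')
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    print(to_user_js(PREFS), end="")
```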

Next

Go to your plex media server and pick a video to play and start to play it. Depending on many things you "may" have to kickstart the play while buffering by tapping on the "orange buffer ring".

The default "may" be to transcode at the original content's bit rate.. which "may" be too high for your Plex media server to transcode to a compatible format, or the network may not be able to sustain the bit rate.. or your browser host computer may not be able to "sustain" smooth playback.

Regardless

Click the "equalizer" control icon in the upper right corner and pick some absurdly "low" bit rate.. such as 320 kbps. It is better to set the player to start at really low speeds and experiment to work your way back up for higher resolutions.

Note: When starting a new session at a different bitrate, the Plex server will have to buffer the content at that new bitrate and "catch up" to where it was in order to "resume" the playback.. the result is that while "testing" different bitrates the time to "restart" can seem inordinately long.. be patient and let it complete the bitrate transition. Once you have profiled and selected a "stable and sustainable" client bit rate, it will be remembered and all your playbacks going forward will begin at the new bitrate and won't take as long to start. The effort will be worth the smooth and seamless playback experience henceforth.

The browser will still "Scale" the content as the window is resized, with whatever resolution the HTML5 media player is capable of producing from the stream.. at worst you'll get a "softened", slightly blurred effect that will probably improve with time or become generally unnoticeable.

If you're likely to resize the window to a smaller "postage stamp" or "rearview" window size on your desktop while conducting other work, the stream will appear crisp and clear and the lower resolution and bitrate will be completely unnoticeable.

Notice all the other controls the HTML5 media player also provides you with. The "impersona" icon allows you to select AAC or alternative sound tracks, pause, linear playback position control, sound and full screen controls are all available.

Finally you may wish to configure your Plex Media Web server/Player to make the Experimental HTML5 video player available, and Direct Play and Direct Stream without Transcoding if the media player knows how to handle the native video media/recorder formats.

Which drives to the next Tip!

The CoryKim EyeTV3 -- Export to Plex -- scripts from Github have been updated to support "No Transcoding" of recordings before moving them from EyeTV to the Plex media archive for serving.

The initial reason is the next generation of SiliconDust HDHomeRun digital tuners now come with the option to encode the streams they are fetching over the airwaves as h.264 "natively".

But even if you don't have a new HDHomeRun digital tuner, disabling encoding on the Plex side means recorded shows will become available from your Plex server immediately after they are recorded, and will be deleted from the EyeTV recordings folder. This is a big benefit if you just can't always wait, or if you happen to be constrained on disk space or CPU capacity on the Plex server.. it's especially useful on NAS class Plex servers with ARM processors or something low power.

Of course if the native format from the broadcasters is incompatible with the player.. it will still have to be transcoded before playing "on the fly" but if you are using the HTML5 player and can select a lower resolution image.. the CPU/network load will be low enough to make playback more than tolerable with very little effort on your part.

11/02/2015

Windows P2V, Post TCP/IP Reconfigurations

Typically a P2V will be created from a raw disk image capture or from the backup files of a live system. On first boot the Windows Plug-n-Play service will inventory the detected hardware and enable it with the drivers currently on the virtual machine's hard disk. Any "missing" hardware will remain configured, but its device driver will not be started.

If the virtual machine environment "emulates" a hardware device for which the "on disk" image contains a compatible device driver, the new Virtual Machine will inventory the new hardware and automatically install the compatible device driver and proceed to "Enable" it.

For network interfaces this can be particularly problematic.

The new interface will not have been assigned a static TCP/IP address, default gateway or DNS source. It will first attempt DHCP and, if that fails, will self-configure with an Automatic Private IP Addressing (APIPA) address. The Network Location Awareness (NLA) features available since Windows Vista will then engage and "Test" the network in order to match it to a "known" network firewall profile {Domain, Private or Public}, and apply that profile's default set of firewall rules to regulate allowed or blocked inbound and outbound TCP/IP traffic.

Then yet another new feature called the Network Connectivity Status Indicator (NCSI) will attempt to use the default gateway to contact a Microsoft beacon site to prove or disprove that the network can be used to connect to the Internet.

DHCP

APIPA

NLA - with multiple profiling "tests"

NCSI

All this takes time and introduces lengthy delays when starting a new network interface on an unknown network, and even longer if the virtual network interface has been deliberately isolated from any other network.

It should also be mentioned that with Windows Vista the TCP/IP stack was further "tuned" to size the TCP receive window dynamically for a given connection, ramping it up depending on the selected algorithm and resetting or ramping it down if a connection fails to establish. This is called "autotuning" and can be changed from dynamic to static behavior from the netsh command prompt.

Additionally TCP/IP stacks can be offloaded onto dedicated hardware for certain chipsets, and jumbo packet support can influence both device driver, virtual machine and host network transfer rates.

Virtual technologies that share physical hardware with virtual machines, like [SR-IOV] and Hewlett Packard "Virtual Connect", or systray tools for managing "binding" and "compositing" bonded network interfaces, can help or conflict within new virtual machines. Interrupt moderation, or throttling of virtual machine interrupts for handling network interfaces, is another potential problem.

There are also alternative device drivers which can be introduced [after] first boot, which paravirtualize or "enlighten" the device driver so that it knows it is actually running in a virtual machine and can better cooperate with the Host to optimize network interface behavior. This is in contrast to "Full" virtualization, in which all physical hardware is virtualized, or "Hardware assisted" virtualization, in which the physical hardware participates in supporting virtualization independent of whether the guest operating system device driver is aware that it is being virtualized.

Many of the service features only really make sense on a mobile platform like a laptop, or on a client system on a fully configured host network. However they still exist on the Windows Server platforms and in general are difficult to resolve.

For one thing, even if all of the timeouts are allowed to expire, attempting to reconfigure the new network interface with a previously used TCP/IP address, even one from a network it is no longer connected to, will produce a warning that the TCP/IP address is currently "assigned" to a missing piece of hardware. Removing it from that piece of hardware is less than reliable even when following the instructions provided in the dialog box.. and then a complete reboot and expiration of all the timeouts will be required before any mistakes or missteps can be discovered. This can take upwards of 30 minutes!

The symptom of this long tale of first boot is that "Identifying Network" in the system tray appears to hang, and any attempt to open the [Network and Sharing Center] will produce a blank or non-responsive window, until all of the network interface self configuration steps have completed.

The way to resolve this problem is to:

A. Disable or "Disconnect the Cable" of the new network interface that will be created by the Host environment for the virtual machine, before the new virtual machine is started. The network interface will then not attempt DHCP, APIPA, NLA or NCSI, the desktop environment will open immediately for the logged in user, and the [Network and Sharing Center] will be immediately available and responsive.

B. Boot first into a simplified environment in which services that may depend upon network connectivity are automatically disabled or severely restricted, so that the Plug-n-Play service can "discover" the new network interface hardware and install and activate a device driver for it. Since it will be "unplugged" from the virtual machine's point of view, it will not begin DHCP, APIPA, NLA or NCSI. After initial discovery and driver installation the Windows operating system will typically need to restart to finish implementing the changes. If possible this is also a good time to disable any services that depend upon network connectivity until the new interface can be statically configured, since each of those services will otherwise attempt to use network services and compound the start up problem by adding their timeouts to a Normal Startup.

C. On the next boot, into a reduced functionality environment, use the ncpa.cpl control applet, control netconnections or the [Network and Sharing Center] wizard panel to access the new network interface and configure a static IPv4 address, gateway and DNS source. It is also recommended to configure a static IPv6 address, gateway and DNS source, since many services prioritize IPv6 over IPv4 and must time out in that layer before falling back to IPv4 to begin opening up tcp and winsock services.
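The static IPv4 part of step C can also be done from the command line with netsh rather than the control panel applet. Below is a minimal sketch that checks the addresses and builds the two commands. The interface name and all addresses in the usage lines are placeholders, and the function name is made up for illustration.

```python
import ipaddress


def build_static_ip_commands(interface, address, mask, gateway, dns):
    """Return netsh commands for a static IPv4 address, gateway and DNS server."""
    # Validate inputs first so a typo fails here, not halfway through netsh.
    for value in (address, mask, gateway, dns):
        ipaddress.IPv4Address(value)
    return [
        f'netsh interface ipv4 set address name="{interface}" '
        f"source=static address={address} mask={mask} gateway={gateway}",
        f'netsh interface ipv4 set dnsservers name="{interface}" '
        f"source=static address={dns} validate=no",
    ]


if __name__ == "__main__":
    for command in build_static_ip_commands(
        "Local Area Connection", "192.0.2.10", "255.255.255.0",
        "192.0.2.1", "192.0.2.53",
    ):
        print(command)
```

The commands need an elevated prompt, and the interface name must match what `netsh interface show interface` reports on the virtual machine.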

While much of this can be done by attaching and booting the Windows RE recovery environment from the original installation environment, it can be made "far" easier by using a "Microsoft Desktop Optimization Pack - MDOP" feature called "DaRT - Diagnostics and Recovery Toolset". Software Assurance and Volume License customers have access to this.

The MDOP comes as an installable CD/DVD image iso with an autorun installer which can be used to install the [DaRT Recovery Image "Wizard"]. Running this Wizard helps create a user customized DaRT.iso > bootable CD/DVD iso or USB image which can then be used to start the virtual machine.

Booting the DaRT.iso image, the system asks if the drive letters of the existing disk image should be mapped in a familiar C:\ pattern, then lands at a "System Recovery Options" page. The option at the bottom of the list of system recovery tools starts the DaRT toolset window.

The two most powerful tools are [Computer Management] and [Registry Editor].

[Computer Management] refers to the "offline" virtual machine image sitting on the virtual hard disk to which this bootable iso has been attached. Any actions in the Computer Management tool affect the contents on the actual offline virtual machine disk image.

Under this tool is access to the currently enabled device drivers and their startup type at boot time, which can be disabled, so as not to start.

This can be useful for drivers which are installed with applications to start at boot time and could cause additional problems. Disabling them here makes sure they will not start, and generally makes uninstalling them and their application package easier since no startup timeouts have to be endured and no shutdown procedure must be run to disable the driver after startup from within the operating system.

Also under this tool is access to the currently enabled services and their startup type at boot time, which can be disabled, so as not to start.

For similar reasons and for a speedier boot while finalizing the initial network configuration you may choose to disable various services.

[Registry Editor] refers to the "offline" virtual machine image registry on the virtual hard disk to which this bootable iso has been attached. Any actions in the Registry Editor tool affect the contents of the actual virtual machine.

Generally, disabling the NCSI service from the registry is a good idea.

Less aggressive Domain network probing in the NLAsvc service can also be configured, but neutralizing the NCSI is usually sufficient.

Another feature of the DaRT "Wizard" is the ability to copy a folder of scripts and tools "into" the boot image that can be accessed from within this recovery environment, which can better automate and assist in finalizing the configuration of the virtual machine. One possible use is to copy additional files from the recovery environment image onto the virtual machine hard disk, and even to set a script for the virtual machine to run on "first boot".

P2V – HP Proliant Support Pack Cleaner

Hewlett Packard ProLiant systems often have monitoring and alerting software and services which must be removed. A popular batch file is widely available that takes care of disabling and removing the services. It is made faster and more effective by pre-booting into DaRT and disabling the associated services so they do not have a chance to hang the virtual machine. When the Windows uninstaller tool is then used, they are quickly removed.

P2V - GhostBuster Device Remover - commandline, task scheduler, UAC options

The "GhostBuster" driver script is also a widely used script for finding and automatically removing enabled hardware drivers for which no hardware is currently detected.

The TCP/IP and Winsock stacks can also be reset, or specific Automatic Tuning features disabled or further customized for the environment. Internet Options referring to unreachable SSL revocation lists and services, or Windows Update servers, can be shut off or adjusted to use proxy services or a local cache.

10/22/2015

Nexus 5X, restore pre-Android 6.0 Mail, News & Weather

Nexus 5X (2015-10-21)

The News & Weather app is version 1.3.01 (1301), called the "GenineWidget". It gets its network information from Weather.com and Google News. Both are stable sources of information, so the app should continue to function even if it is unsupported.

It comes from a forum discussion here Android Forums - Google News & Weather

The download link in that Thread is GenineWidget.apk 746.42 KB

To download it, use a browser on the phone, then make sure the phone allows installing applications from "Unknown" sources: [Phone > Settings (gear icon) > Security > Unknown sources - "toggle to green"]

Then explore the download folder with something like "ES File Explorer", tap on the downloaded file and click "Install" to install it. You may have to uninstall the "native" app for News & Weather "first", but.. I had already removed the new app as soon as I unboxed my phone. Its interface was unfamiliar and I didn't have time to "re-learn" an application I already relied on.

The old News & Weather app is very clean and contains no "Advertisements" the [Settings] ellipses (vertical dots) are located at the bottom of the News & Weather apps main window.

The old Email app [requires] the new Email "service" be disabled before the old Email "service" will install.

To do that, open [Phone > Settings (gear icon) > Apps > Settings (upper right corner vertical dots)] and select [Show system] (this is so that the "Google services" that normally do not show up in the Apps list will show up.. then you can select the hidden Email service).

Scroll down and find the one called "Email", press it and click "Disable". After it's disabled, the Uninstall and Force stop buttons will be disabled.

There are several repackaged Email apks for the old email client, many do not include "Microsoft Exchange" support. For example once they are installed, they only offer IMAP or POP email account support and no option for the Exchange email account support.

The old Email app is composed of [two] Android packages, one for the Exchange service and one for the Email application.

The one discussed here includes links to both pieces and is what worked for me:

AOSP E-mail (Last Active Version) | Nexus 6 | XDA Forums

To download the packages, use a browser on the phone, then make sure the phone allows installing applications from "Unknown" sources: [Phone > Settings (gear icon) > Security > Unknown sources - "toggle to green"]

Then explore the download folder with something like "ES File Explorer", tap on the downloaded files and click "Install" to install them.

TWO: Important Tips!!

- The Exchange Services apk needs to be installed "first" (and it will produce an error message after installing) then the Email apk. It's okay.. it will still work.

- You will [Not] be able to click the "Install" button on the Screen!! To click it you will need to pair a Bluetooth keyboard.. like the Microsoft Bluetooth keyboard with Android support - Then hit the [tab] key to bounce from field to field (until) the [--INSTALL--] field lights up as having focus. Then hit the {enter} key and the install will proceed (this is true for both the Exchange Services.apk and the Email.apk).

IF you do not read this WARNING and do not know to [expect] the [--INSTALL--] button to not work from the phone screen, it will not install.

The download links are:

Exchange Services 6.2-1158763.apk - 1.10 MB

Email 6.3-1218562.apk - 5.73 MB

After the install the New Gmail app will continue to function as before, complete with "Roundy Icons" in the app. Just make sure you do not have an "Exchange" account enabled in the New Gmail app.. I removed a prior Exchange account I set up before, but since the new integrated Exchange email services are disabled [now], it would probably crash the new Gmail app. Just treat them as separate applications and find them in the Application Drawer and long press to put icons for them on the home page.

Finally, if you prefer not to install a Third Party Launcher, you can still change the wallpaper of the home screen to something more neutral to complement the icons on the home page and make them more accessible than hidden.

I use one from the Play store called "wallpaper" by fiskur.

Activating it lets you select a color region and then "fine tune it" using your finger to create a swatch, which is then applied to the home page background by pressing the [ picture icon with a "+" ] in the upper right corner. [pick a hue on the vertical bar by touch, then swipe up and down on the main window to fine tune the actual color swatch to be used (the proposed color swatch will surround the vertical bar and swipe pad in a luminescent "glow")]

The result, when you return to your home page, is that the background has been changed to a solid color of your choosing.

The Nexus 5X is larger than the Nexus 5 by "one" icon row and "one" column.

There is also now a primary colored branding logo in the search box that has goofy kerning that says "Google" and the Microphone icon now has changing status colors with a scythe underneath its "neck".

10/19/2015

Cacti, SMTP (13) Permission denied

If you install a new instance of Cacti and can't send email, SELinux may be enabled.

# setenforce 0

# vi /etc/sysconfig/selinux
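The post does not say what to change in the config file. A common choice is to switch the `SELINUX=` line to permissive so the setting survives a reboot, and that is the assumption behind this small sketch. It works on the file's text and leaves the actual reading and writing of /etc/sysconfig/selinux to you.

```python
def set_selinux_mode(config_text, mode="permissive"):
    """Return config_text with its SELINUX= line set to the given mode."""
    if mode not in ("enforcing", "permissive", "disabled"):
        raise ValueError(f"unknown SELinux mode: {mode}")
    out = []
    for line in config_text.splitlines():
        # Leave comments and SELINUXTYPE= alone; only the SELINUX= line changes.
        if line.strip().startswith("SELINUX="):
            line = f"SELINUX={mode}"
        out.append(line)
    return "\n".join(out) + "\n"


if __name__ == "__main__":
    sample = "# example config\nSELINUX=enforcing\nSELINUXTYPE=targeted\n"
    print(set_selinux_mode(sample), end="")
```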

10/14/2015

Exchange 2010 EMS, fail, fail, connect

TIP!

If your Exchange Management Shell (EMS) PowerShell shortcut opens and tries to connect to a CAS multiple times, fails, then retries and succeeds, [Immediately] suspect 'cruft' from add-on modules in the [web.config] of the PowerShell virtual folder (IISAdmin > Content > Explorer).

In this case it was leftover from an uninstalled module for Advanced Logging applications.

Exchange apparently times out trying to load the module, or has other quality problems with the module and fails. It retries and, seemingly at random, eventually fails 'silently' to load the module and continues on to provide a prompt.

But this can very confusingly and maddeningly leave you wondering why it's taking so long, and there are [no] Event logs, IIS logs or any other indications of problems.

I've seen this exact same thing in other contexts.. the lesson: Exchange depends on IIS but the bridge is very tenuous.. neither side appears to support the other very well with diagnostics.

9/04/2015

Javax email, send hello error fix

javax mail depends on an environment variable to find the hostname when starting an SMTP message hand-off to another server. The hostname can be set by a Java developer so that it doesn't have to be looked up, but developers often skip or overlook it. The method used to resolve the hostname between Java and the OS often breaks, especially in new Linux distros (deprecation of network tools, refactoring of libraries, tossing things overboard). Under RHEL and CentOS, using the /etc/hosts file often fails if the hostname is appended to the end of a long list of aliases; Java only checks the first alias.

Fix:

make sure the hostname is placed at the front of a list of aliases in /etc/hosts

Example:

127.0.0.1 myhostname localhost localhost.localdomain
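The reordering can be sketched as a small helper that takes one /etc/hosts line and moves the hostname to the front of the names. The function name is illustrative, and it works on a single line of text without its trailing newline; reading and writing the real file is left out on purpose.

```python
def put_hostname_first(hosts_line, hostname):
    """Move hostname to the first name position on a hosts-file line."""
    entry, _, comment = hosts_line.partition("#")
    fields = entry.split()
    if len(fields) < 2 or hostname not in fields[1:]:
        return hosts_line  # nothing to reorder on this line
    address, names = fields[0], fields[1:]
    names.remove(hostname)
    reordered = " ".join([address, hostname] + names)
    # Put any trailing comment back where it was.
    return f"{reordered} #{comment}" if comment else reordered


if __name__ == "__main__":
    print(put_hostname_first(
        "127.0.0.1 localhost localhost.localdomain myhostname", "myhostname"
    ))
```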

8/14/2015

Internet Explorer 11, updating the user interface

Internet Explorer 11 is difficult to use and difficult to customize. Here is a way to make it easier to use.

The usual methods of customizing the user interface that worked with previous generations of the Internet Explorer browser no longer work. A way to work around this is to make the best of the IE settings available and then disable the components that no longer allow customization, replacing them with an add-on extension.

One such extension is the Quero Toolbar. It installs in a fairly confusing default state.

To reach the state as displayed in the first image of this article, first download and install Quero Toolbar then open IE11 and press ALT+Q to get to its configuration menu.

Examine each tab in turn and make sure they are setup as follows:

Tab1 - Settings

Tab2 - Ad Blocker

Tab3 - Appearance

Tab4 - Search Profiles

Tab5 - Security

Tab6 - Advanced

Then right click in the exposed toolbar area of IE11 and change the selected options to match this:

The default navigational icons will not be the colorful ones in these images. To get those, visit the Quero Themes page and download the Crystal Theme. It consists of one DLL which contains the icons. Save it to a place like your Documents directory, then open the Tab3 - Appearance tab from the Quero ALT+Q configuration menu and select the Theme by pressing the browse button at the bottom of the tab and selecting the Theme DLL.

All browsers (IE, FF, Cr) have a hardware acceleration option. In general it attempts to offload some of the rendering to the local GPU. In practice it often doesn't work very well and can cause problems and crashes.

If you would like to disable the hardware acceleration feature in IE11, it can be reached by left clicking on the 'gear' icon in the top right, selecting 'Internet Options' and then the Advanced (tab). It will be the first item listed; check it and press [Apply] and then [OK].

The end result is a browser with a viewing and control profile very similar to what can be achieved in Firefox and Chrome.

Internet Explorer 11.0.9600.17959

Mozilla Firefox 40.0.2

Google Chrome 44.0.2403.155 m

note: while I am aware it is possible to go even further and make them virtually identical, this article was merely a quick demonstration of concept. current browsers are more similar than they are different in form and function, and regardless of their underlying differences can reach broader audiences by conforming to more common user interface elements.

8/11/2015

Windows 2008r2, Powershell resuming a Service

For example:

Start > Administrative Tools > Event Viewer

will start an instance of the Event Viewer discovery program focused on the (Local) binary logs

the categories down the vertical navigation panel to the Left generally indicate the source of a notification, Application (user), Security (kernel), System (system)

the levels in the central panel indicating "severity" more descriptive "source" and specific "event"

right-clicking an event and selecting "Attach Task To This Event"

will open a [Create Basic Task Wizard] window and create a Task Scheduler entry

the Action can be set to :

where the [Add arguments (optional):] field can be used to pass a 'horizontal' (one-line) command line script

-command &{Start-Sleep -s 50; Restart-Service -displayname "StorageCraft ImageManager"}

in this case the script says to sleep for 50 seconds and then perform a restart of the registered operating system service (daemon) with the displayname "StorageCraft ImageManager"

the actual "displaynames" of registered services can be obtained from a powershell prompt using the "get-service" command-let, or cmdlet; the displayname is not the same thing as the running process name

7/21/2015

Tomcat, err ssl version or cipher mismatch

When Tomcat is used without a robust web server frontend ( like Apache or Nginx ) to manage SSL connections and sessions, Java keystore problems can produce several misleading error messages in browsers. In addition, the imported certificate and private key used per website must have the same password/passphrase as the keystore itself, and it cannot be "blank".

The browser may display a cryptic error message and refuse to open an encrypted data channel using the certificate, resulting in an open http:// connection with the following message in the browser window:

ERR_SSL_VERSION_OR_CIPHER_MISMATCH

Keytool has no native method for importing a third party signed certificate together with its private key into a new keystore.

The OpenSSL toolkit can create a PKCS12 format (the format Microsoft uses for .pfx files) cert and private key pair.

The Java keytool can then be used to import/convert a PKCS12 storage container into a keystore and set the "keypass" and "storepass" at the same time.

For example, it is common in Red Hat Enterprise Linux to use the /etc/pki/tls directories and genkey utilities from the crypto-utils package to create PEM encoded private key and CSR pairs and receive a signed PEM format CER cert. This is the least friction, most common method known of obtaining SSL certs and is fairly well documented.

The following will produce a keystore that contains the private key and signed cert, with the private key pass and keystore pass set to the same value.

Since this keystore is intended to be used with Tomcat, the alias should be "tomcat"

# cd /etc/pki/tls

# openssl pkcs12 -export -in certs/www.server.com.cer -inkey private/www.server.com.key -out www.server.com.p12 -name tomcat -CAfile certs/ca_bundle.cer -caname root -chain

# mv www.server.com.p12 /usr/share/jdk1.7.0/bin/ ; cd /usr/share/jdk1.7.0/bin/

# keytool -importkeystore -deststorepass changeit! -destkeypass changeit! -destkeystore tomcat_java.keystore -srckeystore www.server.com.p12 -srcstoretype PKCS12 -srcstorepass changeit! -alias tomcat

Since the server is now expected to provide the certificate chain, and in the correct order, the following steps might be wise. Be aware that bundle files often come with multiple certs in one file, and keytool will silently discard all but one certificate per import. If the file does contain multiple certificates, break it into one file per cert and import them separately. The root of the chain possibly appears as the "last" certificate in the bundled "chain file" and might need to be imported "first" in order to avoid problems with mobility clients.

# keytool -import -trustcacerts -alias AddTrustExternalCARoot -file ca_bundle3.crt -keystore tomcat_java.keystore

# keytool -import -trustcacerts -alias USERTrustRSACA -file ca_bundle2.crt -keystore tomcat_java.keystore

# keytool -import -trustcacerts -alias RSAServerCA -file ca_bundle1.crt -keystore tomcat_java.keystore
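Breaking a bundle into one file per cert by hand is error prone, so here is a minimal Python sketch of the idea. The helper names (split_pem_bundle, root_first, write_one_per_file) and the ca_bundleN.crt naming are assumptions made for this illustration, and it assumes the bundle is plain PEM text with the root as the last block, which you should confirm for your own CA.

```python
import re
from pathlib import Path

# Matches one PEM encoded certificate, including its BEGIN/END marker lines.
PEM_BLOCK = re.compile(
    r"-----BEGIN CERTIFICATE-----.*?-----END CERTIFICATE-----",
    re.DOTALL,
)


def split_pem_bundle(bundle_text):
    """Return every certificate block found in the bundle, in file order."""
    return [match.group(0) + "\n" for match in PEM_BLOCK.finditer(bundle_text)]


def root_first(blocks):
    """Reverse the order, assuming the root is the LAST block in the bundle."""
    return list(reversed(blocks))


def write_one_per_file(blocks, out_dir, stem="ca_bundle"):
    """Write each block to <stem>1.crt, <stem>2.crt, ... and return the paths."""
    paths = []
    for number, block in enumerate(blocks, start=1):
        path = Path(out_dir) / f"{stem}{number}.crt"
        path.write_text(block)
        paths.append(path)
    return paths
```

With three certs in the bundle this yields ca_bundle1.crt through ca_bundle3.crt in file order, so under the root-is-last assumption the highest numbered file is the one to hand to keytool first.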

And finally to address the ciphers problem.

The Tomcat connector ( server.xml ) needs to explicitly "tip toe" around known Common Vulnerabilities and Exposures (CVEs). This may look like "magic", but the set is carefully crafted around a balance between known vulnerabilities, the capabilities of a JDK without enhanced cipher capabilities, and what browsers will allow a connection to be formed with.

<Connector

port="443"

protocol="org.apache.coyote.http11.Http11NioProtocol"

maxThreads="150"

SSLEnabled="true"

scheme="https"

keystoreFile="/usr/share/jdk1.7.0/bin/tomcat_java.keystore"

keystorePass="changeit!"

secure="true"

clientAuth="false"

sslProtocol="TLS"

ciphers="

TLS_ECDHE_RSA_WITH_AES_128_CBC_SHA256,

TLS_ECDHE_RSA_WITH_AES_128_CBC_SHA,

TLS_RSA_WITH_AES_128_CBC_SHA256,

TLS_RSA_WITH_AES_128_CBC_SHA

"

/>

It's best to consider carefully whether to forgo the "Free" wisdom and customs gathered and standardized by the more mature and currently very vital and active web server projects like Apache and Nginx. They are constantly being updated and repaired for Zero-Day attacks and exploits.
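The ciphers attribute in the connector above is just a comma separated list, and a typo or stray name in it is easy to miss. As a rough sketch (the function names connector_ciphers and unexpected are invented for this example, and it assumes server.xml parses as ordinary XML), the list can be pulled out per connector and compared against the names you meant to allow:

```python
import xml.etree.ElementTree as ET


def connector_ciphers(server_xml):
    """Map each Connector port to its list of cipher suite names.

    Connectors without a ciphers attribute are left out.
    """
    root = ET.fromstring(server_xml)
    result = {}
    for connector in root.iter("Connector"):
        raw = connector.get("ciphers")
        if raw is None:
            continue
        # Line breaks inside the attribute arrive as whitespace; strip it.
        names = [name.strip() for name in raw.split(",") if name.strip()]
        result[connector.get("port")] = names
    return result


def unexpected(names, allowed):
    """Return the configured names that are not in the allowed collection."""
    return [name for name in names if name not in allowed]
```

Calling connector_ciphers on the text of server.xml and passing the list for port "443" to unexpected, along with the four suite names from the connector above, should return an empty list when nothing has crept in.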

Java is a "language" and does not prioritize "web services" as a daily updated and maintained feature.. by "definition"

Tomcat is almost certainly "guaranteed" to be "Exploitable" the day it is released

This has been widely and strongly publicized, almost from its inception, to serve the useful purpose of an example of "What Not To Do.."

That position has not changed, regardless of less experienced users promoting the idea that "that's what they used to say". It is still true today, and it is still in the documentation; saying otherwise speaks to a programmer's experience level.

The AJP and mod_jk projects explicitly "exist" because of the Java libraries that support them. They are not token projects that serve merely as examples; they are there for a reason. Whenever "bindings" between projects exist and are maintained for "generations", do be curious and suspicious of why that high level of effort is continually put in to maintain them. Unused code would usually fail over time if it were not actively being used. There Is A Reason.. Be Curious !!

Finally, Java "is a Language" with many aspects that "look like" an operating system. Its JVM is not as old or robust, or as well maintained by "language developers", as an operating system maintained by dedicated "operating system developers". A person who mows your lawn does not often fix cars as well as they mow lawns.. please keep this analogy in mind.

If "language developers" were to ever devote the same level of energy, effort and accumulated expertise.. you would probably not appreciate the language as much as a language developed by dedicated "language developers" [ Expertise in one area does not often translate into "Unrelated" Expertise in another area.. to assume so is usually disastrous ]

7/05/2015

RHEL 6, Installing Tomcat with Yum

The 'provides' tag was not added to subsequent newer versions of java, as the tag was gcj specific and gcj is now for all intents and purposes retired. The Sun, Oracle and IBM maintainers have no reason to add this tag as a 'provides', and thus yum, to satisfy the requirement, pulls in the only java that satisfies it.. 1.5.0

There are ['ways'] of tricking the rpm dependency database into thinking it already has this requirement satisfied.. but they are not supported or approved by Red Hat.

Installing Tomcat without enabling the "Optional Subscription Channel" in RHN will result in a Tomcat service which has broken dependencies between java classes and java services in code written for the Tomcat service.

RHN Channel Subscription:

Subscribed Channels (Alter Channel Subscriptions)

[x] Red Hat Enterprise Linux Server (v.6 for x86_64)

|

+-> RHEL Server Optional (v.6 64-bit x86_64)

In particular the following package is only available from the "Optional Channel":

jakarta-taglibs-standard rhel-x86_64-server-optional-6

Pulling in Java 1.5.0 is suboptimal because Java 1.5.0 is no longer supported by its upstream provider.

However Java 1.8.0 changed and removed many of the java classes in use by a large portion of the web service code in common use today (e.g. HashMap).

Therefore it is more desirable to install Java 1.6.0 or Java 1.7.0

While this is possible (and, because of the Alternatives symlinking, Tomcat will run on the designated version of Java once it is installed), java 1.5.0 cannot be removed with the YUM package manager without causing a catastrophic "unwinding" that removes all packages that have the java-gcj-compat requirement.

To paraphrase the most recent statement from bug 1175457

2015-05-12

This bug is not fixed, however a workaround is provided for rhel7.

The true fix will probably never go into rhel6

With these issues in mind the barest base install of Tomcat on RHEL 6.6 can be made with the command:

# yum groupinstall web-servlet

Which will install the following:

tomcat6

tomcat6-el-2.1-api

tomcat6-jsp-2.1-api

tomcat6-lib

tomcat6-servlet-2.5-api

It will provide a webservice, but serve a completely [blank] page.

http://localhost:8080/

[ don't forget about - iptables and selinux ]

The following packages [will not be installed] but you may expect and wish to install them:

tomcat6-admin-webapps

tomcat6-docs-webapp

tomcat6-javadoc

tomcat6-log4j

tomcat6-webapps

One reason is that these other packages pull in even more dependencies for supporting the Example and Sample JSP and Servlet applications, even though "people tend to install tomcat because they want to serve JSP and Servlet applications.."

If you install only the group web-servlet, you're very secure.. but most of your JSP and Servlet apps will not work unless you selectively install the packages that provide support software for JSP and Servlet apps. Kind of a knock-on side effect. Figuring out which packages contain those support services.. is "fun"

Upon enabling the Optional Channel and installing all of the packages, Tomcat behaves more like a freshly compiled source code distribution.

By installing an alternate version of java [before] the java_1.5.0 version is pulled in by a dependency (i.e. on a freshly installed server), the later version of java will take priority and keep the alternatives pointers fixed on the newer version of java. [ alternatively the "alternatives" command can be used to manually adjust the pointers, however it's just easier to install a different java version before 1.5.0 is pulled in ] [ the non-devel package will pull in only the java runtime (JRE); the "-devel" package will pull in the java development kit (JDK) with the javac compiler, which could be useful ]

# yum install java-1.7.0-openjdk java-1.7.0-openjdk-devel

# yum install tomcat6 tomcat6-el-2.1-api tomcat6-jsp-2.1-api tomcat6-lib tomcat6-servlet-2.5-api tomcat6-admin-webapps tomcat6-docs-webapp tomcat6-javadoc tomcat6-log4j tomcat6-webapps

The APR, OpenSSL, JNI - libtcnative.so or tomcat-native component is apparently not available as a packaged option on RHEL6. While provision to accommodate it was made in the /etc/sysconfig/tomcat6 file under JAVA_OPTS=, it was never packaged for the x86_64 architecture.

It can in theory be compiled and inserted into the /usr/lib64 path "since it is in fact a C" library. But then you take on the burden of further maintenance: addressing CVEs and other security audits.

For RHEL7 and Tomcat 7.0 it appears libtcnative may be available as a packaged option.

EPEL and RHSCL also do not appear to offer much support for Tomcat6 on RHEL6.

JBoss however, if contracted and installed, does appear to have a libtcnative; however, that is not to my knowledge a generally supported option unless you are running that platform.

5/18/2015

Syncplicity, How to use with Shibboleth

A cloud hosted account is a signed in user who has the ability to initiate and maintain a file storage session for the purpose of conducting file sharing services over the EMC Syncplicity cloud based file sharing system. Participants who maintain a cloud account are not individually licensed but receive web browser delivered software agents that enable their internet connected workstation or mobile device to use EMC cloud storage to share files.

Customers of EMC often already have an authentication system such as Active Directory, and often use ADFS or an OASIS style SAML2 compatible Single Sign On service in order to avoid proliferation of account management issues and to allow members of their domain to remember only one set of logon credentials.

Shibboleth is a commonly used SAML compliant SSO.

This is an example with Shibboleth 2.4.2 on RHEL6 connected to a Windows 2008r2 Domain.


On the EMC provided Management page for the Customer service site

- NOTE: When setting up SSO "the Management page" may log you out automatically after 30 minutes (before you are finished) . You can log back in regardless of whether SSO is enabled by going to the my.syncplicity.com website and "clicking" the triangle for Sign-in Help it will take you back to the Admin page for your subdomain account.

After signing into the EMC Management site you begin by choosing from the

Menu bar > Admin > Settings

Manage Settings

>Account Configuration

>>Custom Domain and single sign-on.

STEP 1

> Configuration Authentication Settings

>> Domain Settings (should already be filled out for you)

> Single Sign-On (SSO)

>> Single Sign-On Status*

"select" the radio button [Enabled] to un-grey the fields that follow

STEP 2

upload a PEM format X.509 copy of your Shibboleth Idp "internal" signing certificate:

[NOTE: This is the Idp "internal" cert used for signing SAML assertions. It is NOT the SSO Website certificate a users browser receives when they are directed to a logon page]

For example:

The SAML signing cert for Shibboleth is commonly placed here:

/opt/shibboleth-idp/credentials/idp.crt

Master Tip!

be sure to take a look at the [Current Certificate: ] information after it uploads

it is common for an Idp cert to reveal very little information

"too much" information could signal the wrong cert was uploaded

STEP 3

complete the rest of the fields that refer to the Shibboleth server hosted at your site

Provide the Identity provider (Entity Id) for the Shibboleth Idp website: < on your server >

Provide the Customer facing "Sign-in page" (SSO) url: < on your server >

Provide the Customer facing "Logout page" (SSO) url: < on your server >

Then press [ Save Changes ] to save the settings.

note: these are "paths" to web services on your shibboleth server, they have to be setup on your shibboleth server to receive requests and provide answers; they can be mapped to different paths, you have to be sure to use the correct paths; these are provided as common examples

On the Shibboleth server

STEP 1

The EMC website will be "relying" on your Shibboleth server to provide "authentication".

For example:

The shibboleth relying-party file is commonly found here:

/opt/shibboleth-idp/conf/relying-party.xml

The file is in xml format and has a series of "chunks" or "sections" of information

rp:RelyingParty

Where id = "Service provider (SP id) for the EMC hosted website /sp"

Where provider = "Identity provider (Entity ID) for your shibboleth svr"

to save time and typing errors you can copy an existing section and modify it

Syncplicity needs to be added to this section

metadata:MetadataProvider

the Metadata that goes into the file

/opt/shibboleth-idp/metadata/emcshib.xml

has to come from EMC and is specific to your hosted service

here is what it looks like

the Metadata is a "contract" for how the Service Provider will communicate

it includes the SP id, the SAML protocol type, the SP x509 signing cert, what it expects to see used in the Subject line for communications (the "NameID") and how to contact the Service Provider when the Shibboleth server is done fulfilling the User login request

you can obtain it in one of three ways;

1. https://custdomain.syncplicity.com/Auth/ServiceProviderMetadata.aspx

wget https://custdomain.syncplicity.com/Auth/ServiceProviderMetadata.aspx -O emcshib.xml

curl https://custdomain.syncplicity.com/Auth/ServiceProviderMetadata.aspx > emcshib.xml

NOTE: this method currently retrieves metadata with a known problem

2. https://syncplicity.zendesk.com

sign up for a Syncplicity Support account and "open" a Support Ticket

NOTE: support will review the ticket and may take up to 1 day to respond

3. write it yourself

obtaining the Service Provider x509 cert is the only really hard part

you can fetch that from the URL for your hosted site in method # 1
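Whichever way the metadata is obtained, it helps to look at what the "contract" actually says before dropping it into place. The following is a small Python sketch, not an EMC or Shibboleth tool: the function name sp_summary is invented here, and it assumes the file is standard SAML2 metadata whose root element is a single EntityDescriptor.

```python
import xml.etree.ElementTree as ET

# Standard SAML2 metadata and XML signature namespaces.
NS = {
    "md": "urn:oasis:names:tc:SAML:2.0:metadata",
    "ds": "http://www.w3.org/2000/09/xmldsig#",
}


def sp_summary(metadata_xml):
    """Pull the SP id, certs, NameID formats and ACS URLs out of SP metadata."""
    root = ET.fromstring(metadata_xml)
    return {
        # Assumes the root element is the EntityDescriptor itself.
        "entity_id": root.get("entityID"),
        # Base64 cert bodies, with line breaks and indentation removed.
        "certificates": [
            "".join(el.text.split())
            for el in root.iterfind(".//ds:X509Certificate", NS)
            if el.text
        ],
        "name_id_formats": [
            el.text.strip()
            for el in root.iterfind(".//md:NameIDFormat", NS)
            if el.text
        ],
        "acs_locations": [
            el.get("Location")
            for el in root.iterfind(".//md:AssertionConsumerService", NS)
        ],
    }
```

Feeding it the text of emcshib.xml should show the SP id to use in relying-party.xml, the NameID format the service provider expects, and the base64 body of the SP x509 cert needed for method 3.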

STEP 2

The attributes to be returned to the service provider after a successful login need to be defined.

The required attributes need to be added to

/opt/shibboleth-idp/conf/attribute-resolver.xml

resolver:AttributeDefinition

a particular attribute is not required, but at least one must be capable of being turned into the "Name ID" for the conversation; and it must be tagged as being formatted as a

nameid-format:emailAddress

For example

we invent an attribute "EmcID" and fill it with the users mail address from an LDAP query to Active Directory for the authenticated user and tag it as being formatted as a

nameid-format:emailAddress

and SAML encode it with the SAML2StringNameID routine

the result will be when it comes time to pare down the attributes to be sent back to the EMC Syncplicity service provider, this attribute will be available

STEP 3

The attributes to be released need to be defined in the attribute-filter policy file.

The attributes to be released need to be added to

/opt/shibboleth-idp/conf/attribute-filter.xml

afp:AttributeFilterPolicy

an AttributeFilterPolicy applies to a particular Service Provider ID and declares what attributes are Permitted or Denied release, usually you will have more Deny rules than Permit rules

in a SAML2 based server it's also important to filter out the "Transient ID" explicitly since it is by default Permitted and often unintentionally takes the place of the NameID

after all rules are applied to pare down the available attributes for a user, one is selected for the NameID and based on the Metadata from the Service Provider it uses an attribute that is in the format

nameid-format:emailAddress

Syncplicity will use this attribute to find the User Account on the hosted Service Provider site and log them in

STEP 4

It will take them to their familiar Shibboleth SSO logon page for authentication.

NameID = nameid-format:emailAddress

matches a User Account created in the EMC management page for this site.

They will be allowed to initiate a Syncplicity file sharing session.

To be completely clear

Syncplicity "uses" an Email address as the UID or Unique Identifier for a Syncplicity user account in their system. Shibboleth must return a NameID in an email address format that Syncplicity can use to "find" a Syncplicity user account in the EMC system. If it fails to find a match the user may indeed succeed in logging in with their SSO credentials but Syncplicity will present an error screen indicating it could not match up their login with a Syncplicity user account.

It is also not enough to return or "assert" an attribute for a successfully logged in user, it must be mapped or used as the NameID for the SAML conversation. Syncplicity does not scan the attributes returned for "any" email address, it only looks at the NameID of the SAML conversation.

This is "why" it is so critical to make sure a Transient ID doesn't get accidentally mapped into the NameID. It is in the wrong format and will not in general be sourced from the mail attribute for a user.

You can tell from the log messages in the idp-process.log when detail is turned up, which released attribute is being "used" for the NameID. This is a terrific troubleshooting step.

It's even easier if you use a SAML tracer plugin on Firefox to "watch" what is used as the NameID in the conversation between the browser and Syncplicity when it returns from a successful SSO sign in.

Which released attribute will be picked for the NameID can seem a bit random in nature depending on the attributes available after all the attribute filters for that service provider have been applied.
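If you have a decoded assertion in hand (for example copied out of a SAML tracer), the NameID check can be done mechanically. This is a minimal Python sketch under stated assumptions: the names name_id_of and diagnose are made up for the example, the input must be an already decrypted SAML2 assertion or response, and the "@" test is only a crude stand-in for "looks like an email address".

```python
import xml.etree.ElementTree as ET

SAML2_NS = {"saml2": "urn:oasis:names:tc:SAML:2.0:assertion"}
EMAIL_FORMAT = "urn:oasis:names:tc:SAML:1.1:nameid-format:emailAddress"
TRANSIENT_FORMAT = "urn:oasis:names:tc:SAML:2.0:nameid-format:transient"


def name_id_of(xml_text):
    """Return (format, value) of the Subject NameID, or None if absent."""
    root = ET.fromstring(xml_text)
    element = root.find(".//saml2:Subject/saml2:NameID", SAML2_NS)
    if element is None:
        return None
    return (element.get("Format"), (element.text or "").strip())


def diagnose(xml_text):
    """Classify the NameID as 'ok', 'transient', 'missing' or 'other'."""
    found = name_id_of(xml_text)
    if found is None:
        return "missing"
    name_format, value = found
    if name_format == EMAIL_FORMAT and "@" in value:
        return "ok"
    if name_format == TRANSIENT_FORMAT:
        return "transient"
    return "other"
```

A result of "transient" is the accidental mapping described above; "ok" only says the format and shape are right, not that a matching Syncplicity user account exists.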

TIP #1

The logging for Idp, OpenSAML, LDAP and Protocol messages can be increased in this file

/opt/shibboleth-idp/conf/logging.xml

After restart the tomcat Shibboleth process will record additional detail in

/opt/shibboleth-idp/logs/idp-access.log

/opt/shibboleth-idp/logs/idp-audit.log

/opt/shibboleth-idp/logs/idp-process.log

the idp-process.log file will contain the most useful information.

these log files typically roll over at midnight local time.

Be sure to reduce the logging detail after troubleshooting.

TIP #2

The Firefox web browser has a SAML transaction decoder and monitoring tool [ Add-on ]

NOTE: works with Firefox version 29.0 and later "only"

After it is installed

> Tools

>> SAML tracer

will open a separate box and track all of the traffic within open browser windows

traffic identified as encrypted and SAML encoded will be decrypted and decoded into human readable content (can you 'Spot' the <saml2:NameID > line?)

Be Careful when sharing screenshots of decrypted SAML sessions as there is often "sensitive" user information exchanged within the SAML transactions

TIP #3

Once SSO is enabled, only Users with accounts already set up in Syncplicity will be able to log into their Syncplicity accounts.

To be clear

If "End-users" merely visit the hosted portal site at <custom-domain>.syncplicity.com and try to log in with their SSO credentials, they may indeed succeed in authenticating, but upon return from their SSO login page Syncplicity "will not" proceed with the "Self-signup" process. They will be denied access.

Setting up "End-user self-signup" is a completely separate Administrative task.

Once it is configured and enabled End-users will need a specially crafted "invitation URL" created for the Syncplicity hosted domain in order to begin the self-signup process.

The "invitation URL" is unique to the hosted site, but not to the End-user, so once it is created the same URL can be shared with everyone who is entitled within an organization to participate.